Why "AI-First" Design Means Two Completely Different Things

I have now written two articles about designing for AI, and they are about completely different things. That is the problem this piece exists to name.

In one article, I wrote about what it feels like to live inside an AI-coordinated environment. The room that is already right when you walk into it. The trust threshold. The measurement question of how you evaluate something that works by disappearing. That is designing for humans experiencing AI.

In the other, I wrote about what happens when AI is the entity encountering a system. The checkout example. Fanout. Retry spirals. A single agent unintentionally producing the load profile of a DDoS attack because the system it interacted with assumed a human would get embarrassed and stop trying. That is designing for AI as the user.

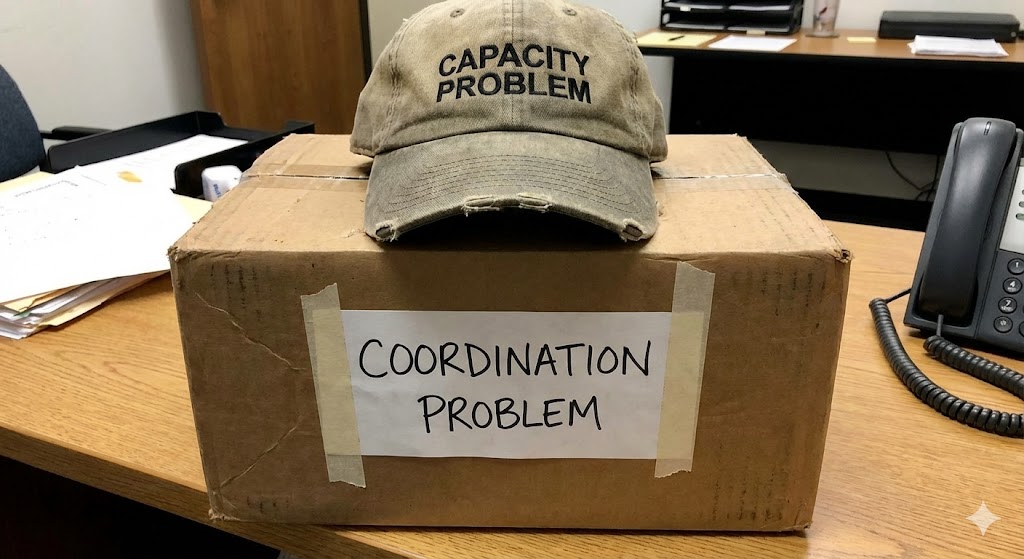

Both of these get called "AI-first design." They are not the same problem. They do not share assumptions, failure modes, success metrics, or architectural requirements. And the industry's habit of treating them as one conversation is producing some expensive mistakes.

Where They Overlap

I want to be fair about what these two problems share, because they do share something important, and it is the reason people conflate them.

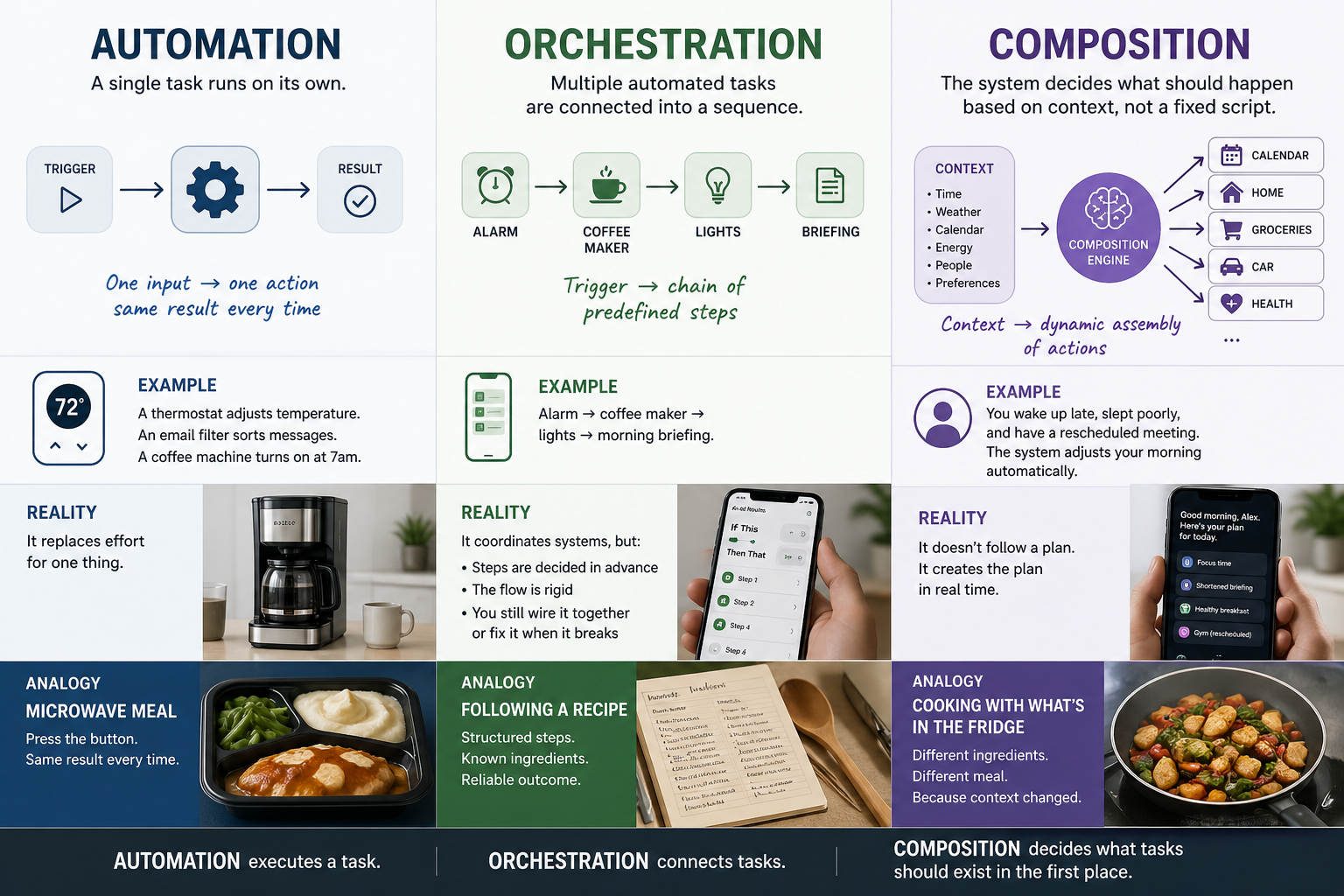

Both require abandoning the assumption that a human is directly interacting with a screen. Traditional UX assumes a person sees a button, presses it, receives a response. Both AI-native design (humans in AI environments) and AI-as-user design (AI encountering systems) break that assumption. In one case, the human is not pressing buttons because the environment is handling things. In the other case, there is no human at the interface at all.

Both also require new measurement frameworks. I wrote about deviation-based measurement for AI-native experiences, tracking how often the system requires correction rather than how often it is used. For AI-as-user design, the measurement problem is different but equally unresolved: how do you measure the health of a system when the entities interacting with it produce load patterns that were never part of the original design assumptions?

And both expose the limitations of walled gardens. AI-native experiences need cross-ecosystem coordination because the environment is not made up of a single company's products. AI-as-user systems need open architecture because agents do not respect ecosystem boundaries any more than they respect checkout line etiquette.

Those overlaps are real. They are also where the similarities end.

Where They Diverge

Here is where it matters.

What the "user" values. A human in an AI-native environment values narrative, aesthetic, emotional grounding, the feeling of presence. I wrote in the tree article about how people need to see themselves in processes conducted on their behalf. AI as a user values none of those things. An agent values speed, determinism, consistent structure, and predictable failure modes. Designing an experience that satisfies both simultaneously is not a compromise. It is two different products.

How failure scales. When an AI-native experience fails for a human, one person has a bad moment. Their room is too cold. Their morning routine misfires. Trust erodes for that individual. When an AI-as-user system fails, the failure is not individual. It is combinatorial. One agent retrying across 1,000 surfaces does not produce one bad moment. It produces a systemic event. The blast radius of failure in AI-native design is personal. The blast radius in AI-as-user design is infrastructural.
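To make "combinatorial" concrete, here is a back-of-the-envelope sketch. The numbers are hypothetical, and the model is deliberately crude: it assumes every call fails, each layer of the stack retries a fixed number of times, and nothing backs off. Under those assumptions, retries multiply rather than add.

```python
def worst_case_requests(surfaces: int, retries_per_call: int, layers: int = 1) -> int:
    """Worst-case request count for one agent fanning out across many surfaces.

    Hypothetical model: every call fails, and each of `layers` independent
    retry layers makes up to `retries_per_call` extra attempts with no backoff.
    Each layer multiplies attempts by (1 + retries_per_call), so the cost
    compounds instead of adding.
    """
    if surfaces < 0 or retries_per_call < 0 or layers < 0:
        raise ValueError("counts must be non-negative")
    attempts_per_surface = (1 + retries_per_call) ** layers
    return surfaces * attempts_per_surface


if __name__ == "__main__":
    print(worst_case_requests(1000, 3))            # 4000
    print(worst_case_requests(1000, 3, layers=2))  # 16000
```

With 1,000 surfaces and three retries per call, one agent generates 4,000 requests. If a second layer also retries three times, the same agent generates 16,000. None of those requests is malicious, which is exactly why the aggregate looks like an attack.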

What "trust" means. For a human in an AI-native experience, trust is emotional. It is the feeling that the system reflects your values and anticipates your needs. For AI as a user, trust is mechanical. It is the predictability of the response surface. Does the system behave consistently? Does it fail gracefully? Does it communicate its state in a way the agent can parse? These are not emotional questions. They are protocol questions. And designing for emotional trust and mechanical trust produces different architectures.
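Here is a minimal sketch of what mechanical trust can look like at the protocol level. The field names (`retryable`, `retry_after_seconds`, `reason`) and the `FailureSignal` type are illustrative assumptions, loosely in the spirit of machine-readable error formats such as RFC 9457 problem details, not a standard schema. The point is that the agent decides what to do from structure, not from an English sentence.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FailureSignal:
    """Hypothetical machine-readable failure signal returned to an agent."""
    status: int                           # HTTP-style status code
    retryable: bool                       # may the agent try again at all?
    retry_after_seconds: Optional[float]  # how long to wait, if the system knows
    reason: str                           # stable enumerated code, not prose


def parse_signal(raw: str) -> FailureSignal:
    """Parse a JSON failure body into a FailureSignal."""
    data = json.loads(raw)
    retry_after = data.get("retry_after_seconds")
    return FailureSignal(
        status=int(data["status"]),
        retryable=bool(data["retryable"]),
        retry_after_seconds=None if retry_after is None else float(retry_after),
        reason=str(data["reason"]),
    )


def next_action(signal: FailureSignal) -> str:
    """Decide deterministically from structure; no natural-language parsing."""
    if not signal.retryable:
        return "stop"
    if signal.retry_after_seconds is not None:
        return f"wait {signal.retry_after_seconds:g}s"
    return "retry"


if __name__ == "__main__":
    # A human-facing body like "Oops! Something went wrong." gives an agent
    # nothing to act on. This body tells it exactly what to do.
    agent_facing = (
        '{"status": 429, "retryable": true, '
        '"retry_after_seconds": 30, "reason": "rate_limited"}'
    )
    print(next_action(parse_signal(agent_facing)))  # wait 30s
```

The design choice that matters is that `retryable` is explicit. An agent that receives "Please try again later" can easily read it as permission to try again immediately, which is how retry spirals start.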

What "invisible" means. In AI-native design, the ideal is invisible AI. The system works and nobody notices. In AI-as-user design, invisibility is the problem. If the system is invisible to the agent, the agent cannot read its state, predict its behavior, or adapt its approach. AI-as-user design requires legibility, not to humans, but to other machines. The experience needs to be readable in a way that has nothing to do with visual interfaces and everything to do with behavioral signals, timing, structure, and response patterns.
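Timing alone can carry state. As a minimal sketch, this hypothetical `LatencySignal` tracks an exponentially weighted moving average (EWMA) of response times and turns it into a coarse state an agent could pace itself by. The smoothing factor and the 500 ms threshold are arbitrary assumptions for illustration, not values from any real system.

```python
from typing import Optional


class LatencySignal:
    """Hypothetical timing-based state signal using an EWMA of latency."""

    def __init__(self, alpha: float = 0.2, degraded_ms: float = 500.0) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha              # weight given to the newest observation
        self.degraded_ms = degraded_ms  # assumed threshold for "degraded"
        self.ewma_ms: Optional[float] = None

    def observe(self, latency_ms: float) -> None:
        """Fold one response time into the moving average."""
        if self.ewma_ms is None:
            self.ewma_ms = latency_ms
        else:
            self.ewma_ms = self.alpha * latency_ms + (1.0 - self.alpha) * self.ewma_ms

    def state(self) -> str:
        """Map the smoothed latency to a coarse, machine-readable state."""
        if self.ewma_ms is None:
            return "unknown"
        return "degraded" if self.ewma_ms >= self.degraded_ms else "healthy"


if __name__ == "__main__":
    signal = LatencySignal(alpha=0.5)
    for latency in (100.0, 900.0):
        signal.observe(latency)
    print(signal.state())  # degraded (EWMA reaches 500.0 ms)
```

An agent reading "degraded" can slow down before anything fails outright. That is the kind of adaptation a system cannot invite if it is invisible to the agent.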

I saw this divergence play out in miniature when I connected a Muse S Athena brain-computer interface to Tethral. The BCI did not interact with the coordination layer the way a voice command does. It produced different timing patterns, different signal structures, different feedback expectations. In that moment I was the human user having an AI-native experience, the room responding to my intent. But the BCI signal was behaving more like an agent than a person: arriving as raw data, without semantics, requiring the system to read it on its own terms rather than through a human-shaped interface. Both design problems were present in the same interaction. And the system had to serve both simultaneously.

Why This Distinction Matters Right Now

Agentic commerce is projected to reach trillions of dollars within the next five years. The infrastructure being built for it is largely designed on human UX assumptions. Login flows, checkout sequences, confirmation screens, error messages written in English for a person to read. These systems work for humans. They break when AI is the user, not because the AI is incompetent but because the system's assumptions about who would use it were baked in at the architectural level.

At the same time, AI-native experiences for humans are being built on the assumption that you can ship a voice assistant with more skills and call it ambient intelligence. That is not ambient intelligence. That is a menu with a microphone. The room that is already right when you walk into it requires coordination infrastructure that no smart speaker company has built or has an incentive to build.

Both of these failures stem from the same root cause: the industry is using one phrase for two problems and solving neither well because the design requirements conflict. A system optimized for human emotional trust is not optimized for machine-speed parallel interaction. A system optimized for agent throughput is not optimized for the feeling of competence that is not yours. Trying to build one system that does both is how you get products that are mediocre for humans and fragile for agents.

What Separating Them Unlocks

Once you separate these problems, the design space opens up in both directions.

For AI-native human experiences, you can design without worrying about agent load patterns. The coordination layer handles the environment. The experience layer handles the human. Deviation measurement makes sense. The trust threshold makes sense. The invisible interface makes sense. You are designing for one species of user, and you can optimize for what that species values.

For AI-as-user systems, you can design for machine behavior without pretending agents have feelings. Consistent response surfaces. Predictable failure modes. Behavioral legibility. Signals that communicate system state without requiring semantic interpretation. This is where the work I started at Cornell, following the signal, becomes directly applicable. The signals have their own behavior. Their own patterns. Designing for those patterns instead of forcing them through human-shaped interaction flows is not just more efficient. It is the only approach that scales.

And at Tethral, this separation is the architecture. The coordination layer handles machine-to-machine orchestration using behavioral signals such as timing, frequency, and response patterns, without requiring human semantics. The experience layer handles the human side, translating coordinated outcomes into environments that feel right. Two layers. Two problems. One platform that does not confuse them.

No incumbent has separated these problems because no incumbent's architecture was designed for both. The smart home companies designed for humans pressing buttons. The agentic commerce companies designed for AI hitting APIs. Neither designed for the space where both exist in the same environment, which is every physical space where AI coordinates devices on behalf of people who also live there.

That is the space Lifestyle AI occupies. And it starts with recognizing that "AI-first" is two problems wearing the same name.

This is part of an ongoing series on the foundational design principles behind Lifestyle AI.
