
What Happens When AI Is the User?

Following the Signal, in the Age of Agentic AI

I remember back in 2016, when I first started my PhD in communication at Cornell, I proposed a version of my dissertation. I still remember the name. It was called "Following the Signal."

I was fascinated, and I still am, by the life of the signals that move around the spaces we inhabit, both physical and digital. It was not a question of agency, at least not yet. It was a question of understanding how what those signals actually do differs from the output we assign them to produce, rendered in some beautiful format for people to consume through user interfaces and user experience. The signal has its own behavior. Its own patterns. Its own way of moving through a system that has nothing to do with the dashboard it eventually appears on.

The response at the time was that this was too esoteric and not enough of a focus on the human work underneath the material activity. A better version of the project, I was told, was to care about the activity of the people and the mutual, even recursive, way that signals and technologies more broadly develop and are developed by the people who experience them. That was the project I pursued. It was a good project, and I don't regret it.

But the question remained. And in a world now co-inhabited by agentic AI, it was perhaps ahead of its time.

Post-PhD and building my own orchestration platform, I have had the opportunity not just to follow the signal, but to discern how these signals natively communicate when they do not need the semantics we assign them. How they conceptualize each other. How they respond to their surroundings and have those surroundings respond to them.

After a year of work, I can tell you with some certainty: those signals are observable. And one outcome of that work is a simple question that has become the foundation of everything we are building at Tethral. What should interaction look like if we are designing processes not just for people, but for AI that places value, appeal, responsiveness, and activity on very different concerns?

The Checkout Line

Let me make this concrete, because the abstraction only gets you so far.

Think about checkout. The experience most of us have, whether in a physical store or online, is modeled after how we do it in physical spaces. You walk up to a register. You present your items. You present your credentials. You add a method of payment. You leave with your goods. That is you at a supermarket in the express lane, and it is you on Amazon, Stripe, or Google Pay. The digital version is faster but structurally identical. One person, one register, one transaction.

Now. You are in that express lane. You have your card ready. You are prepared to move through quickly. And the person in front of you opens a checkbook.

No matter how prepared you are, no matter how advanced the checkout system, no matter how efficient the register, the checkbook slows you down. It slows the checkout. It slows everyone behind you. That is friction. It is annoying. And it is bounded. One lane. Maybe the checkbook person tried a credit card first and it did not work, tried it again, tried a different one, then went for the checkbook. Three or four attempts. The failure is limited to one queue, one moment, one small cluster of inconvenienced people.

That is the human experience of checkout friction. It is linear. It is bounded. And critically, it is self-limiting. The person with the checkbook feels the social pressure of the line behind them. They get embarrassed. They apologize. They move through as quickly as they can. Social embarrassment is, in a real sense, a rate limiter on human checkout failure.

What Changes When AI Is the One Checking Out

Agentic commerce is here and growing. AI agents are already making purchases, booking services, comparing prices, and executing transactions on behalf of people and businesses. McKinsey projects $3 to $5 trillion in agent-mediated commerce by 2030. And most of the systems they are interacting with were designed for the checkout experience I just described. One person. One register. One bounded failure at a time.

Here is where it gets interesting, and for my fellow security folks, you might sense what is coming.

Each agent, even one that is not a malicious actor, has the capability to act as its own micro-DDoS attack. And here is why. Unlike humans, agents can interact across multiple surfaces simultaneously. That is how they do parallel tasks. It is not a bug. It is the feature. Which also means a single agent can be trying 100 checkout lanes at once. Or 1,000. That is fanout.

And unlike humans, agents do not get embarrassed after trying three times with a declined credit card. An agent does not feel the social pressure of the line behind it. It does not apologize. It does not switch to the checkbook and move on sheepishly. Instead, an agent may retry 100 times. Or 1,000. That is a retry spiral.

Across 1,000 surfaces, 1,000 times, one agent has the ability to interact with a classic checkout system in a potentially devastating way. Not because it intended to. Because the system it encountered was designed around assumptions that do not apply to it. Linear flow. Bounded failure. Social embarrassment as a rate limiter. None of those exist for an agent.
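To put rough numbers on that, here is a minimal sketch of the arithmetic. The function name is hypothetical, and the figures are the illustrative ones from the paragraphs above (one lane and roughly four attempts for the checkbook shopper, 1,000 surfaces and 1,000 retries for the agent), not measurements from any real system.

```python
def total_attempts(surfaces: int, attempts_per_surface: int) -> int:
    """Worst-case request count when every surface is tried the full number of times."""
    return surfaces * attempts_per_surface


# Human at the register: one lane, about four tries before giving up (illustrative).
human = total_attempts(surfaces=1, attempts_per_surface=4)

# One agent: fanout across 1,000 surfaces, a retry spiral of 1,000 attempts on each.
agent = total_attempts(surfaces=1_000, attempts_per_surface=1_000)

print(f"human attempts: {human}")
print(f"agent attempts: {agent:,}")
print(f"ratio: {agent // human:,}x")
```

Fanout and retries multiply rather than add, which is why a single well-behaved agent can produce a load that no single human shopper could.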

Now ask what happens with 1,000 agents. Or a million. Each interacting every minute or more often, at the scale agentic commerce is projected to reach. The system that works perfectly for humans becomes an attack surface for agents, not through malice but through the fundamental mismatch between how the experience was designed and what is now experiencing it.

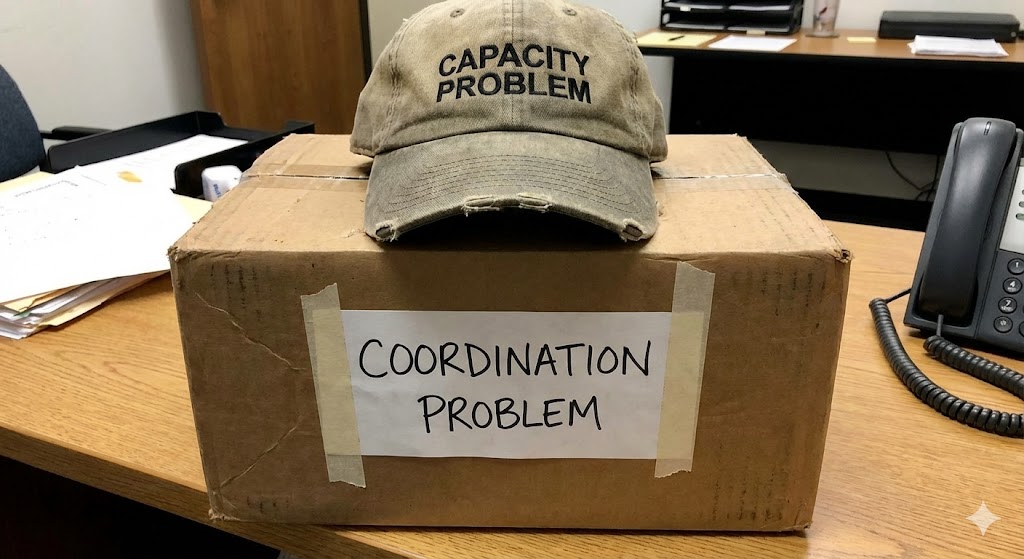

The Design Problem Nobody Framed Correctly

This is not a security problem in the traditional sense. It is not about bad actors exploiting vulnerabilities. It is about well-intentioned agents producing catastrophic load patterns because every assumption baked into the systems they touch (sequential interaction, bounded retries, one transaction per entity per moment) was invisible when humans were the only users. Humans never violated those assumptions. Agents violate all of them by default.

And no amount of rate limiting on the agent side solves the problem, because the surface the agent is interacting with was never designed for the load profile agents produce. You can throttle an individual agent, but you cannot throttle a million agents each operating within their individual limits while collectively overwhelming a system designed for human-speed, human-bounded, human-embarrassed interaction patterns.
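A minimal sketch of why per-agent throttling does not bound the aggregate. Every number here is an assumption chosen for illustration: a million agents, each sending one request per minute, a hypothetical per-agent limit of ten per minute, and a hypothetical surface sized for 60,000 requests per minute. The class and function names are likewise invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoadReport:
    every_agent_within_limit: bool
    aggregate_per_minute: int
    surface_overloaded: bool


def aggregate_load(
    agents: int,
    per_agent_rate: int,    # requests per minute each agent actually sends
    per_agent_limit: int,   # hypothetical per-agent throttle, requests per minute
    surface_capacity: int,  # hypothetical capacity of the surface, requests per minute
) -> LoadReport:
    """Compare individual compliance with the load the surface sees in total."""
    aggregate = agents * per_agent_rate
    return LoadReport(
        every_agent_within_limit=per_agent_rate <= per_agent_limit,
        aggregate_per_minute=aggregate,
        surface_overloaded=aggregate > surface_capacity,
    )


report = aggregate_load(
    agents=1_000_000,
    per_agent_rate=1,
    per_agent_limit=10,
    surface_capacity=60_000,
)
print(report)
```

Under these assumed numbers, every agent is comfortably inside its own limit and the surface still receives far more than it was sized for. The per-agent check and the aggregate check are answering different questions.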

This is what I was reaching toward in 2016 with "Following the Signal." The signal has its own life. Its own patterns. Its own way of moving through a system. When we designed systems for humans, we could ignore the signal's native behavior because the human behavior was the only behavior that mattered. Now there are other entities in the system, entities that interact with it at machine speed, machine scale, and machine persistence. The signal's behavior is no longer a theoretical curiosity. It is an engineering constraint.

What Designing for AI as the User Requires

Designing for people experiencing AI-coordinated environments is one set of questions. I have written about those elsewhere in this series: the invisible interface, deviation measurement, the trust threshold. Those are important.

Designing systems robust enough for AI to be the first one experiencing them is a different set of questions entirely. It takes more than design thinking. It takes a reworking of how we conceptualize what experience should be and what "experience" even means when the entity having it does not think, feel, or get embarrassed like we do.

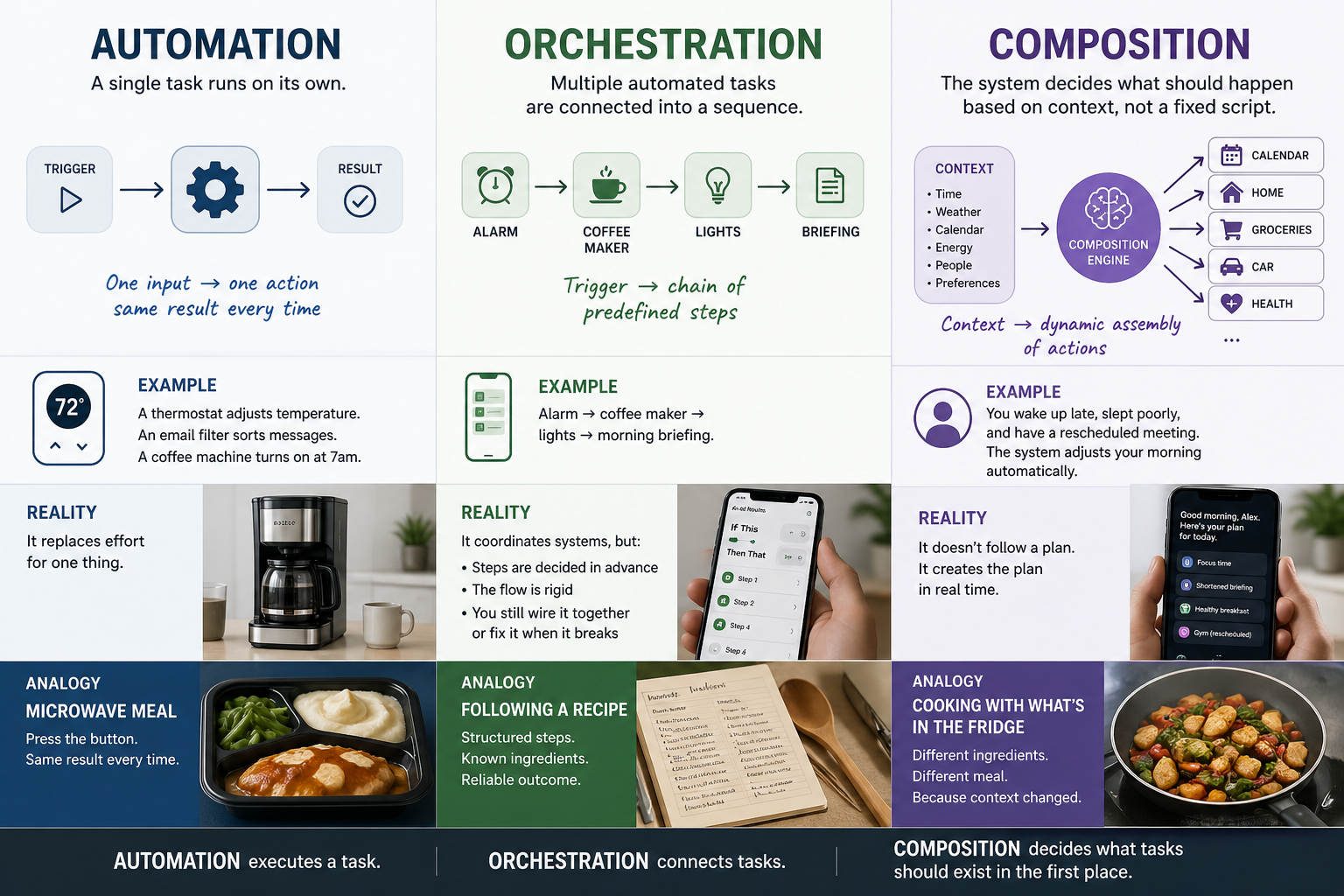

What attracts AI to a system is not what attracts us. What constitutes responsiveness for an agent is not what constitutes responsiveness for a person. What an agent values in an interaction (speed, determinism, consistent structure, predictable failure modes) is different from what a person values, which includes narrative, aesthetic, emotional grounding, and the feeling of presence I wrote about in the tree article.

Those differences are not minor variations on the same theme. They are fundamentally different design requirements that produce fundamentally different architectures. And right now, the entire agentic commerce infrastructure is being built on the assumption that you can run AI through human-designed experiences and it will work. It will not. Not because the AI is bad. Because the experience was built for someone else.

The checkout example is just the most legible version of a problem that exists across every surface agents touch. And the industry is building toward trillions of dollars in agent-mediated transactions on infrastructure that treats the million-agent parallel retry problem as someone else's concern.

It is not someone else's concern. It is the design problem of the next decade. And it starts with a question that sounded too esoteric in 2016: what are the signals doing when we are not watching?

This is part of an ongoing series on the foundational design principles behind Lifestyle AI.
