.jpeg)

Behavior, Not Content: Why the Future of AI Infrastructure Is Pre-Semantic

We have spent the better part of a decade and hundreds of billions of dollars teaching machines to understand language. The models are extraordinary. They reason, they retrieve, they generate. And yet the production systems built on top of them are failing in ways that have almost nothing to do with understanding.

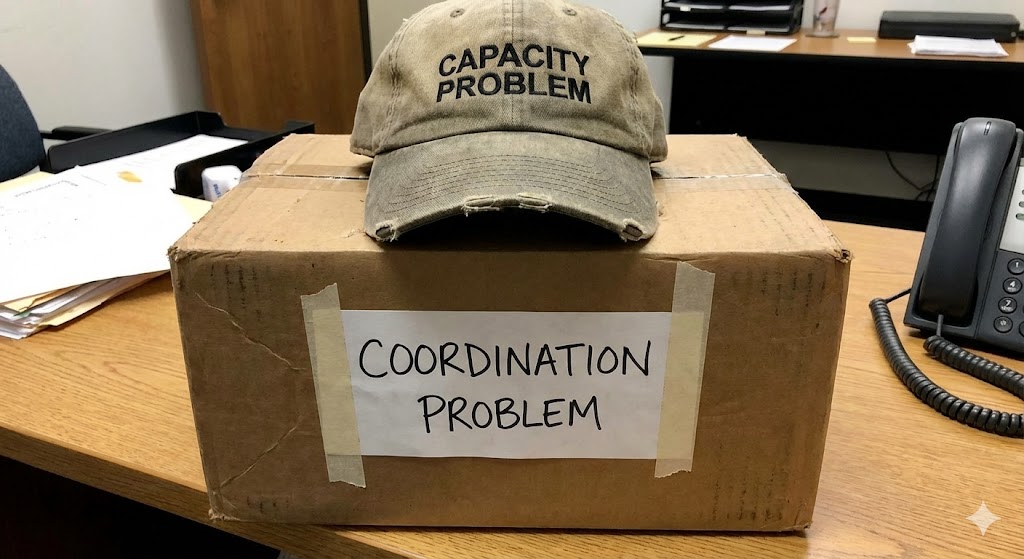

The failures are structural. Retries cascade into more retries. Partial completions trigger expensive human escalation. Late aborts burn tokens on work that was doomed three steps earlier. Entire workflows thrash, restart, and thrash again. If you talk to the teams running these systems, the complaint is never "the model isn't smart enough." The complaint is that the pieces don't coordinate. The orchestra has world-class musicians and no conductor.

This should concern anyone paying attention to the trajectory of AI investment. The industry has poured its resources into the semantic layer, into making individual models more capable, while treating coordination as an afterthought. Something to be solved with retries and logging and the occasional human in the loop. That bet made sense when AI systems were tools. It stops making sense the moment they become participants. And we crossed that line some time ago.

The coordination problem is not a model problem

There is a seductive logic to the idea that better models will solve coordination failures. If the components are smarter, the system will be smarter. This is roughly as true as saying that hiring better employees eliminates the need for management. It confuses the intelligence of parts with the behavior of wholes.

Agentic AI systems, the kind now being deployed across finance, logistics, enterprise automation, and government, involve multiple models and tools interacting in sequences that no single designer fully controls. The failure modes that matter are not semantic failures. They are interaction failures. A perfectly accurate model that fires at the wrong time, in the wrong sequence, or in response to an already-failed upstream call does not produce a perfectly accurate outcome. It produces expensive waste.

The infrastructure to manage these interactions does not exist in any serious form. What exists instead is observability tooling, which is forensic by nature. It tells you what went wrong after the damage is done. It gives you the postmortem. It does not give you the prevention. The distinction matters enormously, and the industry has been surprisingly slow to recognize it.

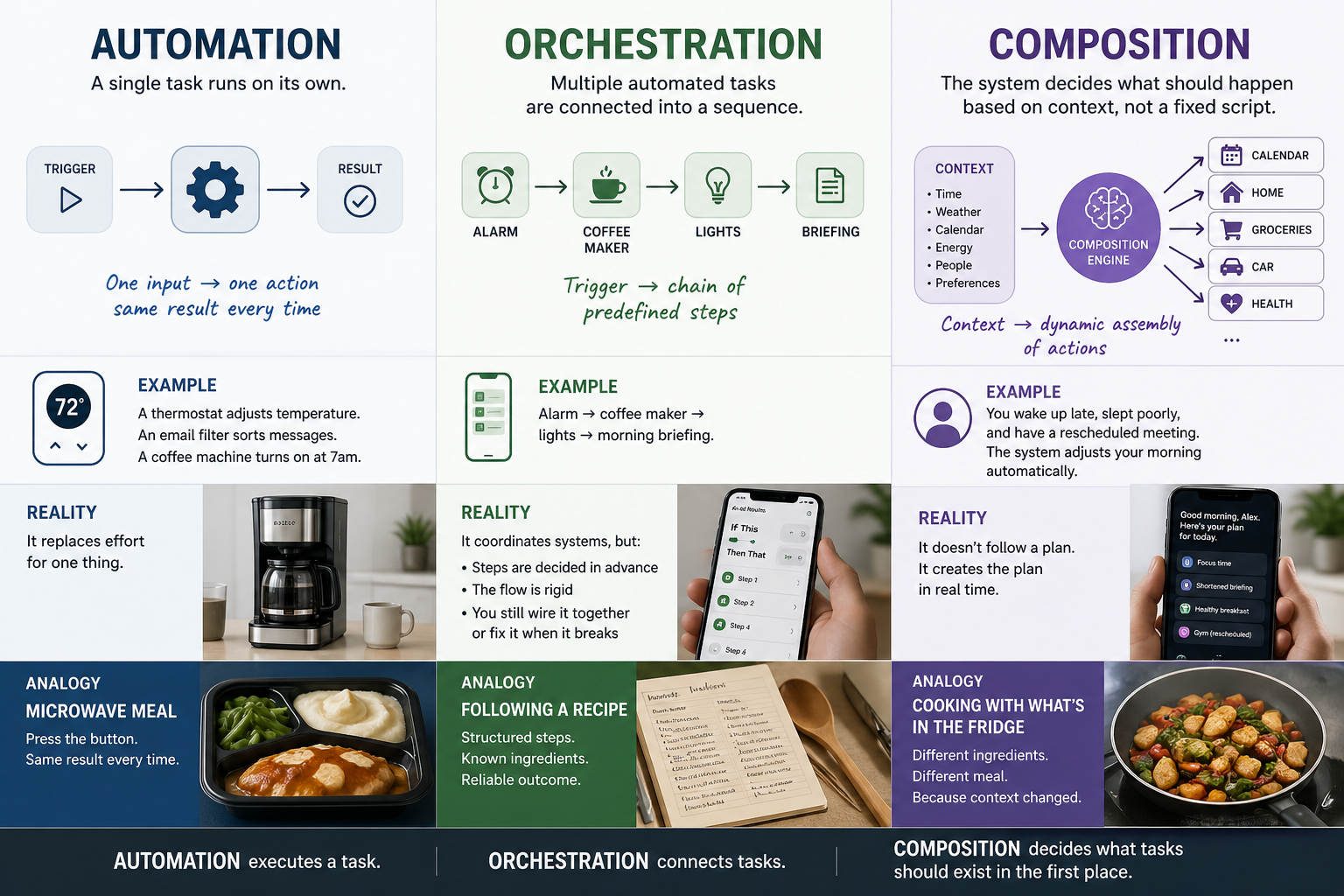

Three layers, and the one we skipped

It is useful to think about internet infrastructure in eras, not because the history is tidy but because the pattern is instructive.

The first era was delivery. Content delivery networks made web content fast and globally available without understanding anything about what the content said. Nobody expected Akamai to interpret your web pages. The entire value proposition was that it did not need to.

The second era, the one we are still living in, is understanding. Large language models, vector databases, retrieval systems. All aimed at making content usable, interactive, and intelligent. This is where most of the money and attention remain.

The third era is coordination. When AI systems become agentic, when they act and interact rather than merely respond, the interaction itself becomes both the dominant cost and the dominant risk. Not the intelligence of any individual call, but the behavior of the system across calls. We have built almost nothing for this layer. We have, instead, asked semantic tools to do a job they were never designed for, and we are paying for that choice in ways that show up clearly on the invoice but remain strangely absent from the strategic conversation.

Content-blindness is not a feature. It is a requirement.

There is a deeper architectural issue that deserves more attention than it gets.

If your coordination layer needs to read prompts and responses in order to coordinate, you have made a choice with consequences that compound in every direction. You inherit every compliance regime, every data residency requirement, every competitive sensitivity, and every legal exposure that comes with touching content. In multi-tenant environments, this is a serious problem. In cross-organizational deployments, where the real scale lives, it is disqualifying. No two companies will agree to route their prompts through shared infrastructure that inspects what they say.

Coordination that operates on behavioral signals, on timing, frequency, sequence, and fanout patterns rather than on content, sidesteps this entirely. Not as a privacy courtesy, but as the only architecture that functions at the boundaries where coordination matters most: across tenants, across organizations, across trust domains. The systems that need coordination the most are precisely the ones where content inspection is least acceptable. This is not a coincidence. It is the design constraint that determines what is buildable at scale.

Who pays the coordination tax first

Coordination cost is not evenly distributed. Hyperscalers can absorb inefficiency for years, and they have structural incentives to integrate new control layers only after someone else has proven the category. This is not a criticism. It is a description of how platform economics work. The companies that build the cloud are rarely the first to build the layers that make the cloud usable.

The pain concentrates among operators who own downstream consequences. Workflow orchestrators managing complex multi-step automations. AI gateway operators who can see the traffic patterns and the waste in their logs. Fintech platforms where a retry cascade has direct financial exposure. Enterprise teams building agent systems where reliability is a contractual obligation, not an aspiration.

These teams are watching their inference spend climb while their model performance technically improves. They can see the gap between what the models can do and what the systems actually deliver, and they are looking for the layer that closes it. Most of them have not yet found a name for what is missing.

The measurement tends to end the debate

For teams evaluating whether this matters for their stack, the most productive thing to do is measure it. Pick one production workflow where you suspect coordination waste is present. Count the retries. Track the token burn on work that was ultimately discarded. Map how partial failures propagate through the system. Look at how agent fanout amplifies a single bad call into a cascade of wasted compute.

The savings come in two forms. The first is direct: tokens burned on inference that could have been prevented, rerouted, or terminated earlier. The second is systemic. Cascading retries. Workflow thrash. The expanded blast radius during partial outages that turns a small problem into an expensive one. Most teams discover the scale of the problem the first time they take it seriously. It is rarely small.

This layer is coming. The coordination problem does not resolve itself as models improve, any more than network congestion resolved itself as bandwidth increased. The question for infrastructure buyers is whether they build on it early or spend the next two years retrofitting it into systems that were designed without it.

RELATED POSTS

.jpg)