Machines Are Relational. We Just Pretend They Aren't.

There is a convenient fiction at the heart of how we talk about technology. The fiction is that machines are neutral. That a system processes inputs, applies logic, and produces outputs, and that none of this is shaped by anything other than the explicit instructions it was given. The machine has no perspective. It has no orientation. It arrives at each interaction fresh, unburdened by context, carrying nothing of its own into the exchange.

This is wrong. And the fact that it is wrong has consequences that ripple through every system we build.

Situatedness is not a human monopoly

There is a concept in philosophy called situatedness. It describes the way a knower is always embedded in a particular context, a particular position, a particular set of conditions that shape what they can perceive and how they interpret it. The idea is usually applied to humans. You do not experience the world from nowhere. You experience it from a body, in a place, with a history of prior experiences that have taught you what your sensory inputs could mean, should mean, or might mean. Your perception is not raw data processing. It is interpretation, shaped by everything you have already encountered.

The conventional view is that machines are the opposite of this. They are the view from nowhere. They process without interpreting. They compute without context. This is the foundational assumption behind most of the language we use to describe software: it executes, it follows instructions, it implements specifications. All of these words position the machine as a passive conduit between human intention and computational output.

But spend any time building systems that interact with each other, that coordinate across environments and dependencies and organizational boundaries, and this assumption starts to fall apart. The machine is not arriving from nowhere. It is arriving from somewhere very specific. It was built in a particular context, with particular libraries, particular training data, particular architectural assumptions, particular failure modes baked into its design. It carries all of that into every interaction. Not as memory in the human sense, but as structural orientation. The machine relates to the world through the conditions of its making, the same way a human relates to the world through the conditions of their experience.

What a machine brings to the room

Consider something as simple as a timeout policy. An engineer sets a timeout of 30 seconds based on the expected performance of a dependency in the environment where the system was developed and tested. That timeout is not a neutral parameter. It is an expectation about the world. It encodes an assumption about how fast things should be, what constitutes degradation, and when to give up. When that system is deployed into a different environment, with different latencies, different load patterns, different failure characteristics, the timeout does not adapt. It continues to enforce the worldview of the context in which it was created.

This is a trivial example. The non-trivial version is everything.

A retry policy encodes a theory about failure: that failures are transient, that the same request is worth attempting again, that persistence is more valuable than restraint. A routing algorithm encodes a theory about optimization: what should be fast, what can wait, what matters. A model's training data encodes an entire epistemology: what counts as knowledge, what patterns are meaningful, what correlations are worth preserving. None of these are neutral. All of them are situated.
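To make the timeout and retry examples concrete, here is a minimal sketch in Python. Everything in it is hypothetical: `CallPolicy`, `outcome`, and the latency figures are invented for illustration and do not come from any particular library or system. The only point is that the same numbers, unchanged, pass a different verdict once the environment changes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CallPolicy:
    """Hypothetical policy object; the names are illustrative only."""
    timeout_s: float    # assumption: a healthy dependency answers within this
    max_attempts: int   # assumption: failures are transient and worth retrying


def outcome(policy: CallPolicy, observed_latency_s: float) -> str:
    """Classify one call the way the policy's author would have."""
    return "ok" if observed_latency_s <= policy.timeout_s else "timed_out"


# A policy tuned where the dependency usually answered in a few seconds.
policy = CallPolicy(timeout_s=30.0, max_attempts=3)

# Same policy, two environments (latencies are made-up numbers).
home_latency_s = 4.0        # the environment the policy was written for
deployed_latency_s = 45.0   # a slower environment it was later deployed into

print(outcome(policy, home_latency_s))      # ok
print(outcome(policy, deployed_latency_s))  # timed_out

# Worst-case time spent before giving up, if every attempt times out.
worst_case_s = policy.timeout_s * policy.max_attempts
print(worst_case_s)  # 90.0
```

Nothing in `policy` records why 30 seconds and three attempts were chosen. The reasoning stayed behind in the original environment, and only the verdict travels.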

The machine does not know it has a perspective. That is the difference between machine situatedness and human situatedness. A human can, at least in principle, reflect on their own positionality and adjust for it. A machine cannot. But the absence of self-awareness does not mean the absence of orientation. It just means the orientation operates without examination, which, if you think about it for a moment, describes quite a lot of human behavior as well.

Why this matters for coordination

The practical consequence becomes visible the moment you have multiple machines interacting. Each one brings its own situatedness to the exchange. Its own timeout assumptions. Its own retry theories. Its own optimization priorities. Its own implicit model of what the world looks like and how it should behave.

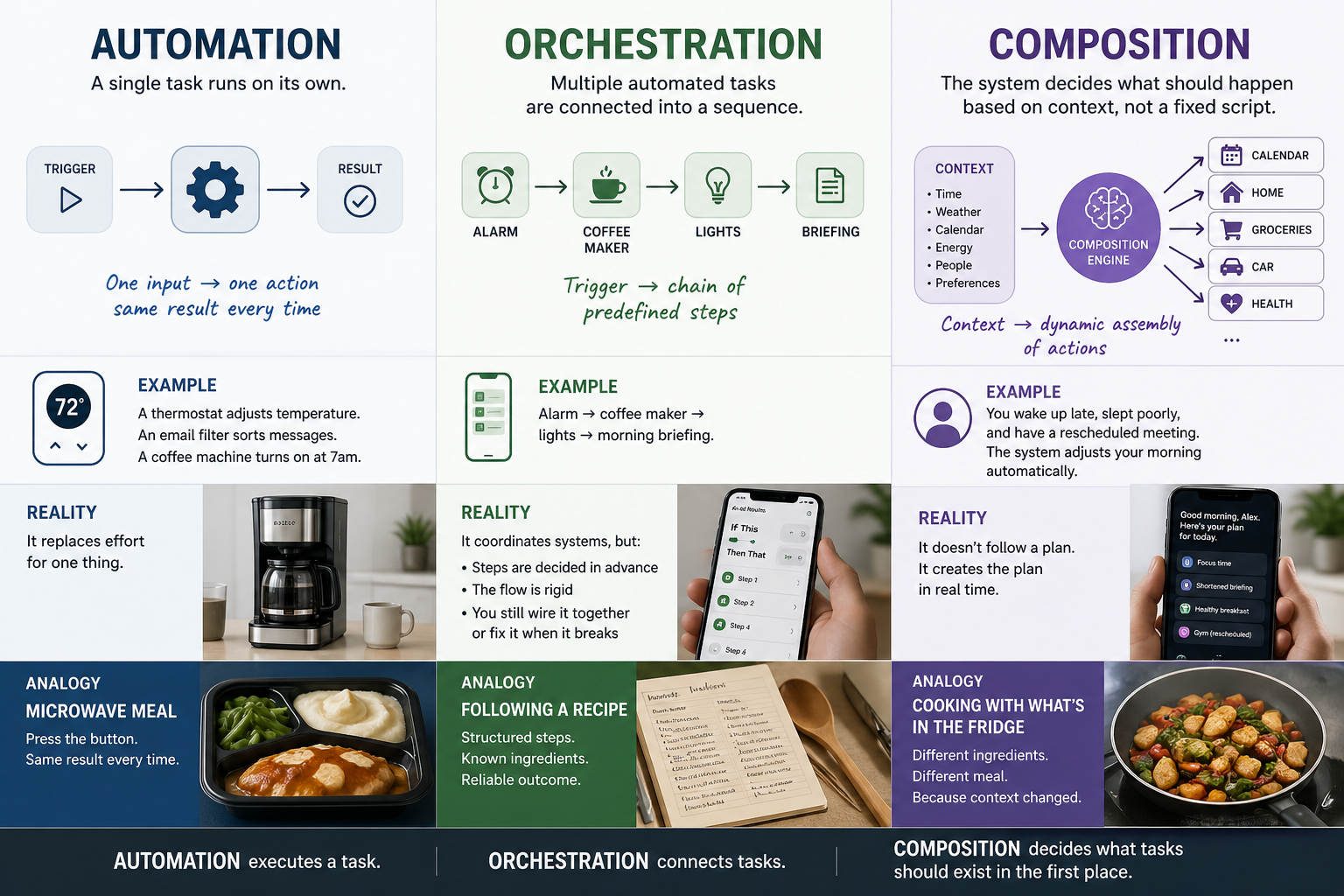

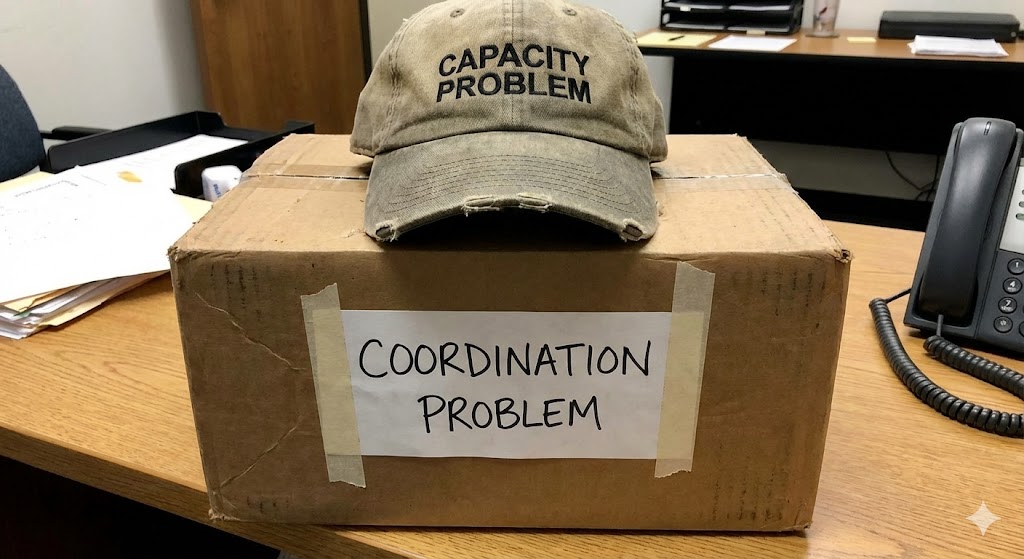

When these orientations are compatible, the system works. When they are not, the system fails in ways that are difficult to diagnose precisely because we do not have a framework for talking about machine disagreement. We call it a bug, or a configuration error, or an integration issue. We rarely call it what it is: a conflict between two situated perspectives that were each reasonable in their own context but are incompatible in the shared environment where they now operate.

This is not an abstract philosophical point. It is a description of why multi-agent systems fail. Each agent was built in a particular context, trained on particular data, designed with particular assumptions about the world it would operate in. When those agents are composed into a system, their situatedness does not disappear. It compounds. And the coordination failures that result are not failures of logic or capability. They are failures of relationship. The machines are relating to the world through incompatible frames, and nothing in the architecture is mediating between those frames.
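A toy simulation can illustrate how two individually reasonable settings become incompatible once composed. This is a sketch under stated assumptions, not a model of any real system: `simulate` is a hypothetical helper, the numbers are invented, and it assumes the callee keeps working on a request even after the caller has given up on it.

```python
def simulate(caller_timeout_s: float, caller_attempts: int, callee_work_s: float):
    """Return (caller_succeeded, seconds_of_work_the_callee_started).

    Assumption: the callee starts its full unit of work on every request
    and does not cancel it when the caller times out.
    """
    work_started_s = 0.0
    for _ in range(caller_attempts):
        work_started_s += callee_work_s
        if callee_work_s <= caller_timeout_s:
            return True, work_started_s
    return False, work_started_s


# Caller was tuned against a fast dependency: 2 s timeout, 3 attempts.
# Callee was designed for thorough work: 5 s per request.
print(simulate(caller_timeout_s=2.0, caller_attempts=3, callee_work_s=5.0))
# (False, 15.0)

# The same callee with a caller whose expectations happen to match it.
print(simulate(caller_timeout_s=10.0, caller_attempts=3, callee_work_s=5.0))
# (True, 5.0)
```

In the first case neither side has a bug in isolation. The caller never gets an answer, the callee does three times the work, and the only thing that changed between the two runs is whether their expectations of each other were compatible.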

The thing we are actually building

If machines are relational, if they carry the context of their making into every interaction, then the behavioral signals they produce are not noise. They are expression. The timing of a response, the pattern of retries, the shape of fanout, the rhythm of interaction, all of these carry information about the machine's situated orientation, about the assumptions it is operating under, about the worldview encoded in its design.

Reading those signals is not monitoring. It is listening. And coordinating based on those signals is not traffic management. It is mediation between situated perspectives that cannot mediate for themselves.

This is, ultimately, what a pre-semantic coordination layer does. It does not read the content of interactions because the content is not where the relational information lives. The relational information lives in the behavior, in the patterns of interaction that reveal what each machine expects, what each machine assumes, and where those expectations and assumptions are about to collide.
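As a rough illustration of reading behavior rather than content, the sketch below looks only at when requests arrive and guesses at the retry assumption behind them. It is not the coordination layer described here and makes no claim about how such a layer works; `describe_cadence`, its labels, and the `tolerance` value are all assumptions made for this example.

```python
def gaps(timestamps):
    """Seconds between consecutive request arrivals."""
    return [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]


def describe_cadence(timestamps, tolerance=0.25):
    """Label a request pattern using timing alone (no payload inspection).

    The labels and the tolerance are illustrative choices, not a standard.
    """
    g = gaps(timestamps)
    if len(g) < 2 or any(x <= 0 for x in g):
        return "unknown"
    # Ratio of each gap to the one before it: ~1 means steady, >1 means slowing.
    ratios = [later / earlier for earlier, later in zip(g, g[1:])]
    if all(abs(r - 1.0) <= tolerance for r in ratios):
        return "fixed-interval"
    if all(r > 1.0 + tolerance for r in ratios):
        return "backing-off"
    return "irregular"


print(describe_cadence([0.0, 1.0, 2.0, 3.0]))   # fixed-interval
print(describe_cadence([0.0, 1.0, 3.0, 7.0]))   # backing-off
print(describe_cadence([0.0, 1.0, 1.2, 5.0]))   # irregular
```

A fixed interval suggests a client that assumes failures clear quickly. A widening interval suggests one that assumes the other side needs room. Neither inference requires opening a single message.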

The fiction that machines are neutral has been useful. It has made engineering legible, made specifications tractable, made the whole enterprise of building systems feel like a problem of logic rather than a problem of relationship. But it is still a fiction. And the systems we are building now, agentic, autonomous, composed across organizational boundaries, are large enough and complex enough that the fiction is starting to cost us more than it saves.

The machines are relational. The question is whether we build infrastructure that acknowledges that, or continue to be surprised when situated perspectives collide and nobody built anything to mediate between them.

John Lunsford is the CEO and founder of Tethral, and the inventor of TriST, a geometric coordination protocol for agentic AI systems. His PhD research at MIT and Oxford focused on autonomous system-to-society adoption. He writes about the intersection of machine behavior, coordination, and infrastructure.
