
What Does It Look Like to Biohack Your Home?

I wear a Nuro ring, an Apple Watch, and sometimes a headband that scans my brainwaves. Between the three of them, my body is generating a continuous stream of data about my sleep, my heart rate, my stress levels, my recovery, and my neurological state. I know more about what my body is doing at any given moment than anyone in any generation before me could.

Here is what frustrates me about it. My body is broadcasting. My home is not listening.

My ring knows I slept poorly last night. My lighting system does not care. My Apple Watch knows my heart rate variability has been unusually low all morning, a common sign of stress. My thermostat has no idea. My headband knows when I am focused and when I am drifting. My environment does nothing with that information. My calendar knows I have a dinner meeting in two hours. My kitchen does not know that either. Every one of these devices generates data that could meaningfully shape the environment around me, and instead it goes to a dashboard I check later, after the moment when it could have been useful has already passed.

That gap, between what your body knows and what your environment does with it, is one of the most interesting unsolved problems in connected technology. And almost nobody is working on it.

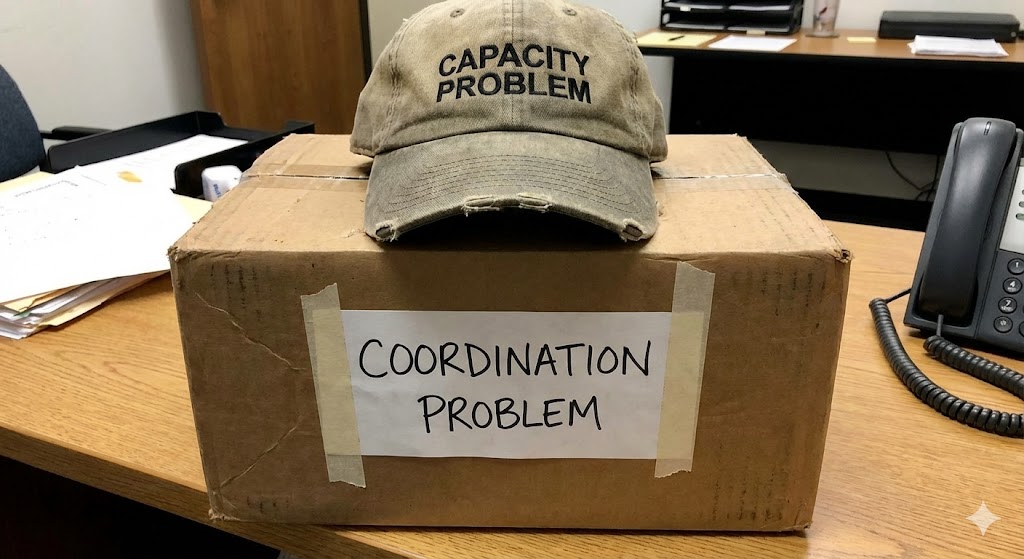

The Data Exists. The Coordination Does Not.

This is not a sensor problem. The sensors are extraordinary. Wearables like Whoop and Oura track heart rate variability, sleep staging, respiratory rate, skin temperature, and recovery scores. Continuous glucose monitors (CGMs) track metabolic response in five-minute intervals. Even a basic Apple Watch generates a continuous stream of biometric context about the person wearing it.

All of that data is real, it is granular, and it is generated in real time. And virtually all of it terminates in an app. A graph you look at later. A score you check in the morning. A notification that tells you what your body was doing an hour ago.

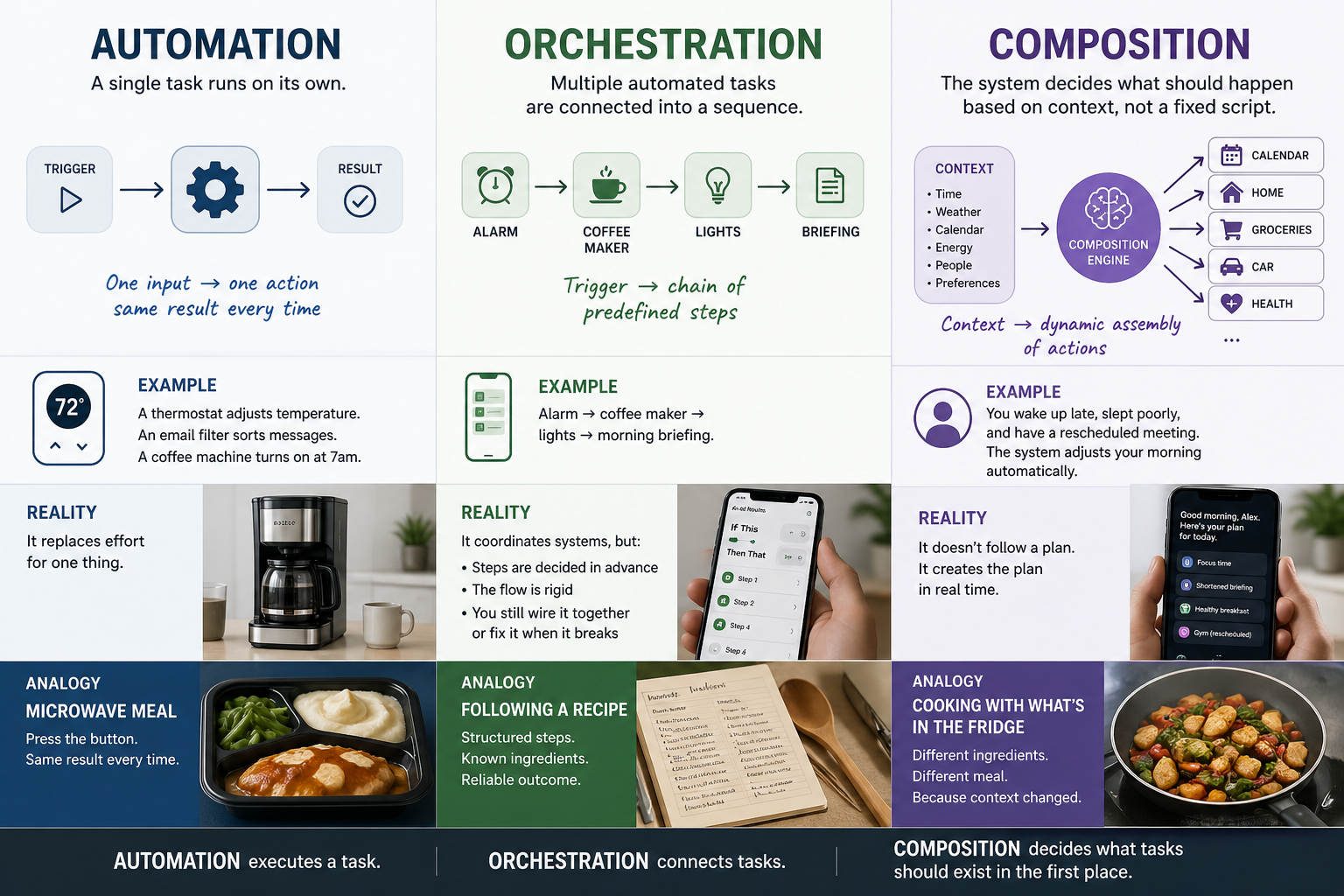

The missing piece is the coordination layer that would let your environment respond to what your body is doing right now. Not on a schedule someone programmed. Not through a voice command you had to think to say. Through the biological signals your body is already producing, routed to the physical environment around you in a way that serves you without requiring you to manage it.

In an earlier piece on the design challenge of AI-native experiences, I wrote that the scariest question is how you measure something that works by disappearing. This is the frontier case of that problem. If your circadian data adjusted your lighting automatically, not on a timer but on your biology, would you notice? Or would you just feel better and not know why?

What This Would Look Like

I want to be careful here. I am describing a frontier, not a finished product. Some of this is possible today with enough duct tape. Most of it requires a coordination layer that does not exist in the current connected technology landscape. But the components are all real, and the gap between them is engineering, not science fiction.

Your sleep data arrives before you wake up. The bedroom lighting shifts gradually based on your sleep staging, not an alarm time. The temperature adjusts to match your body's recovery state. By the time you open your eyes, the room has already responded to how you slept, not to a schedule someone set last week.

Your heart rate variability indicates elevated stress during a workday. The environment responds: lighting warms slightly, notifications quiet, ambient sound shifts. You did not ask for it. You did not even notice the stress building. But the environment read your body before your conscious mind caught up, and it adjusted.

Your glucose is dropping an hour before dinner. The kitchen environment shifts to support meal preparation. Not cooking for you. Signaling that it is time, adjusting the space, surfacing the context you need. The dish analogy from my earlier article about agent design applies here: it is not about the technology doing everything. It is about the technology participating in the rhythm of your life without removing you from it.
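All three scenarios share one shape: a signal arrives, a condition is checked, and an environmental action follows. Here is a minimal sketch of that shape in Python. The signal names, thresholds, and action names are illustrative assumptions of mine, not clinical guidance and not an existing API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Reading:
    signal: str   # hypothetical signal name, e.g. "hrv_ms"
    value: float


@dataclass(frozen=True)
class Rule:
    signal: str                         # which signal this rule listens to
    condition: Callable[[float], bool]  # when the rule should fire
    action: str                         # hypothetical named environment action


# Illustrative thresholds only; real ones would be personal and learned over time.
RULES = [
    Rule("hrv_ms", lambda v: v < 30, "warm_lighting_and_quiet_notifications"),
    Rule("glucose_mg_dl", lambda v: v < 80, "prepare_kitchen"),
]


def actions_for(reading: Reading, rules: list[Rule] = RULES) -> list[str]:
    """Return the actions whose rule matches this reading's signal and condition."""
    return [
        rule.action
        for rule in rules
        if rule.signal == reading.signal and rule.condition(reading.value)
    ]
```

Calling `actions_for(Reading("hrv_ms", 25.0))` returns the lighting and notification action, while a reading of 60 returns nothing. The point of the sketch is that the environment reacts to the reading itself, not to a clock.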

Why Nobody Built This Yet

The answer is not technical. It is architectural. Every wearable lives in its own ecosystem. Whoop talks to Whoop. Oura talks to Oura. Apple Watch talks to Apple Health. The data exists in silos designed for dashboards, not for environmental coordination. No wearable manufacturer has an incentive to let your biometric data flow to your thermostat, because your thermostat belongs to a different company's ecosystem.

This is the same walled-garden problem I wrote about in the IoT piece, but applied to your body instead of your speaker. The industry forces you to choose: you can have great biometric data or you can have a connected home, but the two will not talk to each other because nobody built the coordination layer between them.

And this gap is widening, not closing. Every year wearables generate richer, more granular, more real-time data. Every year that data hits the same ecosystem wall. The sensors improve. The coordination does not. Which means the frustration of your body broadcasting while your home ignores it gets worse with every product cycle, not better. The companies making the sensors and the companies making the devices have no shared incentive to solve this. If anything, they have the opposite incentive: keep the data inside their dashboard, keep the user inside their app, keep the subscription inside their revenue model. The gap is not an oversight. It is a feature of how the industry makes money.

That is the gap Lifestyle AI is designed to close. Not by owning the sensors or the devices, but by coordinating between them. An open coordination platform does not care whether the data comes from a Whoop or an Oura or an Apple Watch. It does not care whether the output goes to Hue lights or a Nest thermostat or a Sonos speaker. It cares that you expressed a preference, whether you expressed it through a voice command, a gesture, a brain-computer interface, or the continuous broadcast of your own biology, and that the environment responded.
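To illustrate what "does not care" could mean in practice, here is a rough sketch, assuming a thin adapter on each side. The vendor labels, payload field names, threshold, and the `calm` action are all hypothetical; no real wearable or smart-home API is implied, and this is not a description of how Lifestyle AI is actually implemented.

```python
from typing import Protocol

# Hypothetical payload field names per source; invented for illustration.
HRV_FIELD_BY_SOURCE = {
    "ring_vendor": "hrv",
    "watch_vendor": "heart_rate_variability",
}


def normalize_hrv(source: str, payload: dict) -> float:
    """Map a vendor-specific payload to one common value in milliseconds."""
    return float(payload[HRV_FIELD_BY_SOURCE[source]])


class Actuator(Protocol):
    """Anything in the home that can carry out a named action."""

    def apply(self, action: str) -> str: ...


class Lights:
    def apply(self, action: str) -> str:
        return f"lights:{action}"


class Thermostat:
    def apply(self, action: str) -> str:
        return f"thermostat:{action}"


def coordinate(source: str, payload: dict, actuators: list[Actuator],
               threshold_ms: float = 30.0) -> list[str]:
    """Send a 'calm' action to every actuator when normalized HRV is below threshold."""
    hrv = normalize_hrv(source, payload)
    if hrv >= threshold_ms:
        return []
    return [actuator.apply("calm") for actuator in actuators]
```

The coordinating function never mentions a brand. Swapping the ring for a watch, or the lights for a speaker, changes an adapter and nothing else.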

The Ethical Dimension Nobody Wants to Talk About

I need to address this directly because biometric data in the home is intimate. If your heart rate variability is shaping your lighting, who decides what that means? If your glucose data is adjusting your kitchen, who owns the pattern? If your sleep staging is changing your morning, who has access to the fact that you slept badly?

These are not hypothetical questions. They are the reason this frontier has remained mostly theoretical. The companies with the sensor data do not trust the companies with the home devices. The companies with the home devices do not want the liability of acting on biometric signals. And nobody wants to be the first to explain to a regulator why a thermostat knows your heart rate.

I do not have a complete answer to this, and I distrust anyone who claims to. But I do have a design principle: the user constructs the experience. Not the platform. Not the device manufacturer. Not the wearable company. You decide what signals your environment listens to, what actions it takes, and what stays private. Vibecoding for your lifestyle means you set the rules. The platform coordinates within them.

That is not a privacy policy. It is an architecture. And the architecture has to be built with this question at the center, not bolted on at the end.
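One way to picture rules that live in the architecture is a user-owned policy object that the platform must consult before anything happens. This is a sketch under my own assumptions; the class and field names are hypothetical, and the default here is to deny anything the user has not explicitly allowed.

```python
from dataclasses import dataclass, field


@dataclass
class UserPolicy:
    """Rules set by the user; the platform may only act inside them."""

    allowed_signals: set[str] = field(default_factory=set)
    allowed_actions: set[str] = field(default_factory=set)
    private_signals: set[str] = field(default_factory=set)

    def permits(self, signal: str, action: str) -> bool:
        # A signal marked private never drives the environment,
        # even if it also appears in the allowed list.
        if signal in self.private_signals:
            return False
        # Default deny: both the signal and the action must be opted in.
        return signal in self.allowed_signals and action in self.allowed_actions


policy = UserPolicy(
    allowed_signals={"hrv", "glucose"},
    allowed_actions={"warm_lighting"},
    private_signals={"glucose"},
)
```

With this policy, `policy.permits("hrv", "warm_lighting")` is true, while glucose is blocked because the user marked it private, and any action the user never listed is refused.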

What This Means for Everyone

Biohacking sounds niche. I get it. The word conjures images of people with NFC chips in their hands and nootropic stacks on their counters. And some of that world is exactly as niche as it sounds.

But the trajectory is not. The Apple Watch already does basic health monitoring for more than 170 million people. Continuous glucose monitors are moving toward consumer adoption. Sleep tracking is in nearly every fitness wearable on the market. The volume of biometric data available to the average person is growing every year, and it is growing faster than the infrastructure that would let it do anything useful beyond a dashboard.

The biohackers are just the early adopters. The question they are asking, "what should my environment do with what my body knows," is a question every person with a smartwatch will be asking within five years. The infrastructure to answer it needs to exist before they ask.

And that infrastructure will not come from the wearable companies or the device companies. It cannot. They each own one side of the equation and have no business reason to bridge to the other. The coordination layer has to sit between them, belong to neither, and serve the person wearing the sensor and living in the room. That is the architectural bet Lifestyle AI makes.

That is what Tethral is building toward. Not biohacking as a product category. Biohacking as a leading indicator of where all connected experience is headed: environments that respond to who you are, not just what you say.

This is part of an ongoing series on the foundational design principles behind Lifestyle AI. Next: why accessibility, biohacking, and BCI are not edge cases. They are the frontier of normal.
