What We Do Not Know About Agent Interaction Should Concern Us More Than It Does

John Lunsford, Founder and CEO of Tethral

Here is a strange thing about the most sophisticated AI systems in the world. They are very good at appearing to work. An agent can navigate a complex task, touch a dozen systems, produce a clean result, and deliver it in a tone that suggests everything went smoothly. And you have almost no way of knowing whether that is true.

You do not know how many times it retried before it succeeded. You do not know how many tokens were consumed by the agent arguing with a system that did not respond the way it expected. You do not know whether the latency you experienced was processing time or friction time, whether the system was thinking or fighting. You do not know which of the twelve systems it touched responded cleanly and which ones required the agent to adapt, reformulate, and retry in ways that cost compute, time, and stability without leaving a trace in the output.

The output looks fine. The work underneath is invisible.

That invisibility is not a minor technical detail. It is the central unsolved problem of agentic infrastructure. And the more capable agents become, the worse it gets, because a more sophisticated agent is better at hiding the friction it encountered. A smarter agent does not produce less friction. It produces friction you cannot see.

The Sophistication Problem

This is counterintuitive, so I want to be precise about it.

A simple automation fails visibly. It hits an error and stops. It throws an exception. It produces a log entry that says: this broke, here is where, here is why. You can see the failure. You can fix it.

An intelligent agent does not do that. An intelligent agent encounters a failure and adapts. It retries with a different approach. It reformulates the request. It finds an alternative path. It recovers. And the result it delivers looks identical to the result it would have delivered if nothing had gone wrong. The recovery is invisible to the user. It is also invisible to most monitoring systems, which track output quality and response time but not the internal cost of getting there.
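To make that concrete, here is a minimal sketch of what keeping the recovery visible could look like. Everything in it is an assumption for illustration: `RecoveryLedger`, `call_with_ledger`, and `flaky_lookup` are hypothetical names, not part of any existing tool, and a real agent's recovery logic is far richer than a retry loop.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List, Tuple, TypeVar

T = TypeVar("T")


@dataclass
class RecoveryLedger:
    """Hypothetical record of what one call cost, including failed attempts."""
    attempts: int = 0
    failed_seconds: float = 0.0
    errors: List[str] = field(default_factory=list)


def call_with_ledger(fn: Callable[[], T], max_attempts: int = 3) -> Tuple[T, RecoveryLedger]:
    """Retry fn the way an adaptive agent might, but return the cost of recovery too."""
    ledger = RecoveryLedger()
    for _ in range(max_attempts):
        ledger.attempts += 1
        started = time.perf_counter()
        try:
            result = fn()
        except Exception as exc:
            # Time spent on an attempt that failed is friction, not work.
            ledger.failed_seconds += time.perf_counter() - started
            ledger.errors.append(repr(exc))
            continue
        return result, ledger
    raise RuntimeError(f"gave up after {ledger.attempts} attempts: {ledger.errors}")


# Illustrative downstream call that fails twice before answering.
calls = {"n": 0}


def flaky_lookup() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("downstream did not answer")
    return "ok"


result, ledger = call_with_ledger(flaky_lookup)
print(result, ledger.attempts, len(ledger.errors))
```

The caller still gets the clean result. The difference is that the two failed attempts now exist somewhere other than inside the agent.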

This means the better your agent gets, the less you know about what it is actually doing. The sophistication that makes the agent useful is the same sophistication that makes its behavior opaque. And that opacity has costs that accumulate in places nobody is watching.

Token consumption runs three, five, ten times higher than projected because the agent is spending tokens on recovery, not work. Latency gets attributed to processing when it is actually friction with a downstream system. Stability risks stay invisible because the agent routed around a degrading system instead of reporting that the system was degrading. Every one of these is happening right now in production agentic systems. The teams running them do not know it because the output looks fine.

The output always looks fine. That is the problem.

This Is Not an Observability Problem

The industry's answer is better monitoring. More tracing. More logging. More dashboards. Tools like LangSmith, Helicone, and OpenTelemetry that watch every step an agent takes and measure every token it consumes. Those tools are good at what they do. They are also looking in the wrong place.

They watch what happens inside the agent. I am talking about what happens between the agent and the system it touched.

Not the agent's internal reasoning. The collision. The moment of contact between two systems with different assumptions, different timing, different protocols, different failure modes. That collision produces cost, latency, and instability that do not live inside either system. They live in the space between them. No amount of tracing the agent's internal logic will make that space visible, because the friction is not a property of the agent. It is a property of the interaction.

And the problem compounds when the systems the agent is touching are not modern APIs designed for agentic workflows. They are legacy systems. Payment processors built for human-speed transactions. Building management systems running protocols from the last decade. Medical devices with firmware that predates the concept of an AI agent. The friction an agent produces when it hits a contemporary system is real. The friction it produces when it hits a legacy system is an order of magnitude worse and an order of magnitude less visible, because legacy systems were never instrumented to report what happened at their boundary. They just responded. Or they did not. And the agent adapted either way, invisibly.

What Happens at the Boundaries

I have spent the past year on a specific question: what actually happens when machines interact?

Not what we designed to happen. Not what the architecture diagram says should happen. What actually happens at the boundary between an agent and a system when real data moves in real time across real infrastructure. The handshake. The negotiation. The moment where two systems built by different teams, for different purposes, on different assumptions, encounter each other and have to figure out how to communicate.

And here is something I have come to believe after a year of measuring this space: the negotiation that matters most happens before the systems even authenticate. Before credentials are exchanged, before protocols are agreed upon, before semantics enter the picture, there is a pre-semantic interaction where the systems are reading each other's behavior, timing, and response patterns to determine whether communication is even viable. That layer is almost entirely unexamined. And it is where the largest friction costs originate.

That boundary is where the cost lives. Tokens consumed by negotiation rather than work. Latency from mismatched expectations between systems. Stability degrading because one system's assumptions about timing, format, or response structure do not match the other's. And the friction compounds, interaction by interaction, into a systemic cost nobody budgeted for because nobody can see it.
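As a rough sketch of what budgeting for that cost might involve, the snippet below splits token spend per system into work and friction. It assumes each exchange has already been labeled as friction or not, which is the hard part and is not shown here. The `Interaction` record, the `friction_share` function, and the sample numbers are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Interaction:
    """One agent-to-system exchange. All fields are illustrative assumptions."""
    system: str        # which downstream system was touched
    tokens: int        # tokens spent on this exchange
    is_friction: bool  # True if the exchange was a retry or reformulation


def friction_share(interactions: Iterable[Interaction]) -> Dict[str, float]:
    """Return, per system, the fraction of tokens spent on friction."""
    total: Dict[str, int] = defaultdict(int)
    friction: Dict[str, int] = defaultdict(int)
    for item in interactions:
        total[item.system] += item.tokens
        if item.is_friction:
            friction[item.system] += item.tokens
    return {name: friction[name] / total[name] for name in total if total[name] > 0}


# Made-up log, for illustration only.
log = [
    Interaction("payments", 400, False),
    Interaction("payments", 100, True),
    Interaction("thermostat", 300, True),
    Interaction("thermostat", 300, False),
]
shares = friction_share(log)
print(shares)
```

Grouping by system rather than by agent is deliberate: it attributes the cost to the boundary where it was incurred.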

In software, this boundary is already expensive and opaque. The problem gets fundamentally harder when you cross from digital into physical.

Where Digital Meets Physical

Most agentic AI lives on a screen. Agent talks to API. API responds. Both sides speak similar protocols. The failure modes are well understood.

Now put that agent in a room.

An agent coordinating a smart lock, a thermostat, a lighting system, and a security camera is no longer operating in a controlled digital environment. The smart lock has its own firmware, its own latency profile, its own failure modes that have nothing to do with the agent's expectations. The thermostat responds on a different timescale than the lighting system. The camera is bandwidth-constrained in ways a calendar API never is.

The agent navigates all of this simultaneously, absorbing the friction, delivering a result that looks seamless. You said "winding down" and the room responded. It felt effortless. But between your words and the room's response was a negotiation between five systems across two ecosystems with three different communication protocols. You have no idea what that negotiation cost or whether it is degrading over time.

Here is what degradation looks like when nobody is watching. I lost an uncle to a coordination failure in a hospital. Not a misdiagnosis. A misprioritization in the orchestration of events that should have produced attention and did not. Every individual system in that hospital was functioning. The interaction between them is where the failure lived. And nobody was watching that space.

That is not a performance metric. That is a life.

What I Found When I Started Looking

A few teams in the industry have started noticing pieces of this. Galileo's benchmarks surface the token cost of retry loops. Agents Arcade named the "reasoning tax" for compute burned on dead ends. DataRobot proposed "success-per-dollar" as a metric. These are pieces of a picture none of them have assembled.

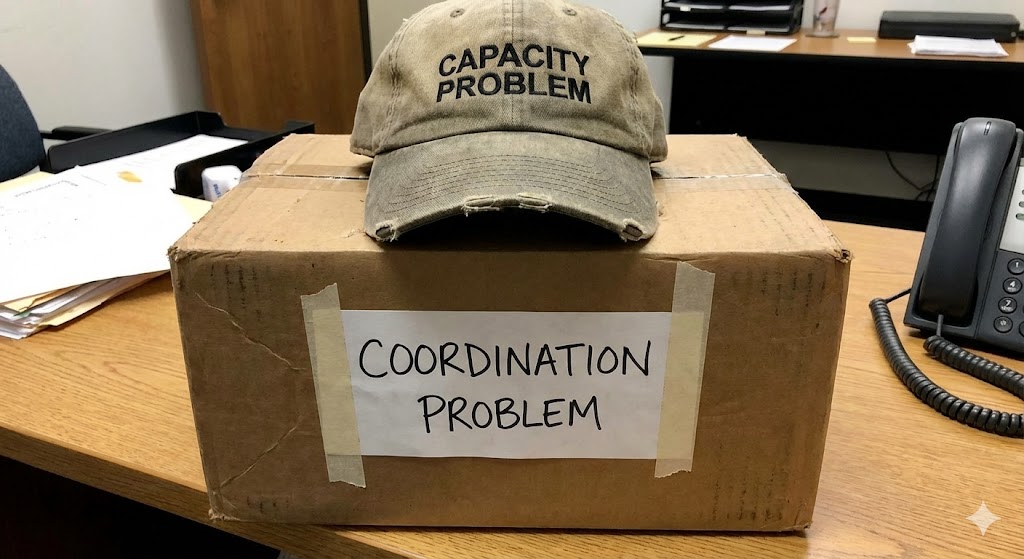

What I found when I started building measurement infrastructure for interaction friction was something none of those frameworks describe. In our testing across multi-system coordination tasks, between a quarter and a third of total compute consumption was friction. Not the agent reasoning. Not the agent executing. The agent negotiating with systems that did not behave the way it expected. And the friction was driven primarily by conditions on the sender side, the agent's approach to the boundary, not the receiving system's response.

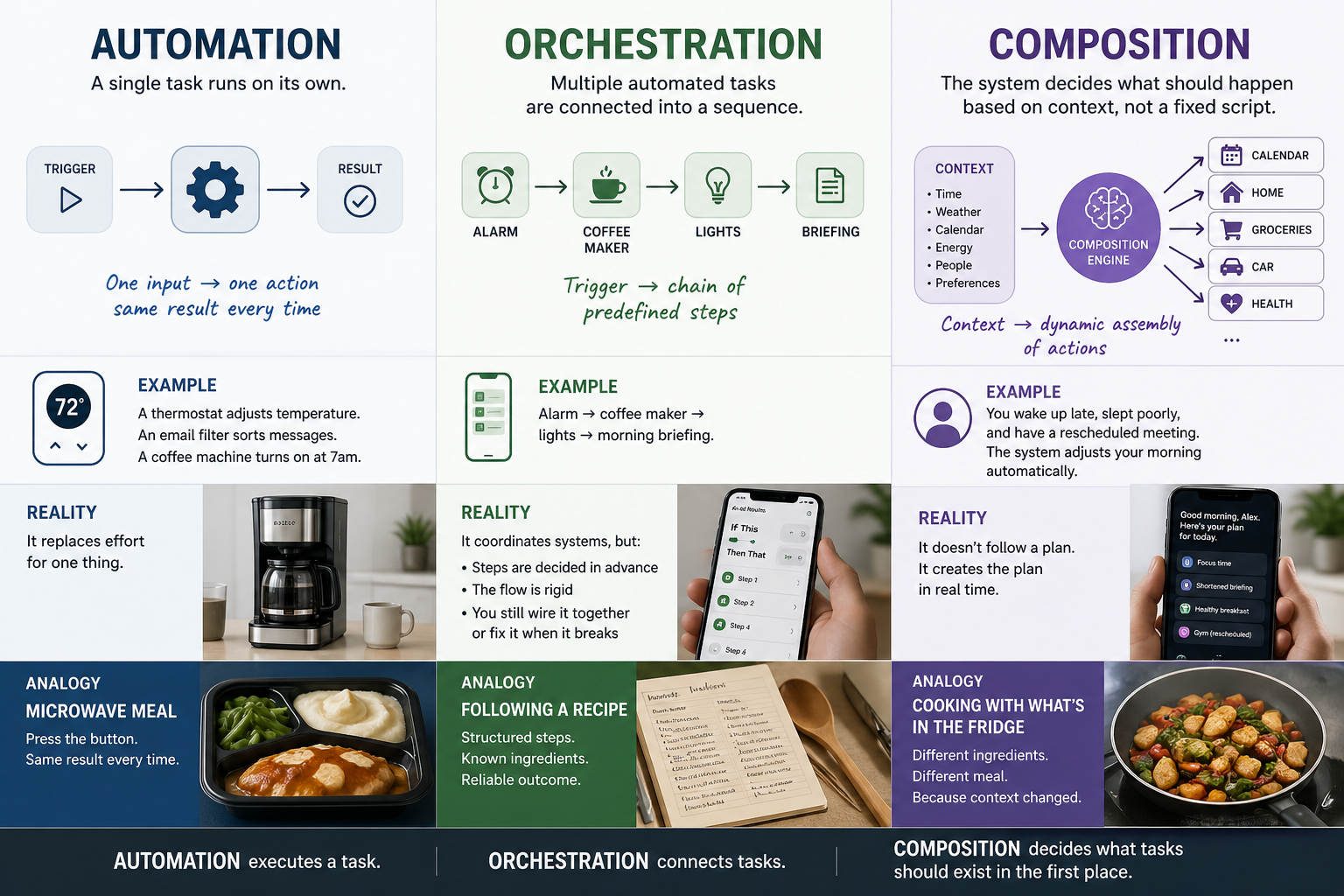

That was not what I anticipated. And it changes how you think about every solution the industry is currently pursuing. If friction is sender-generated, then faster systems on the receiving end do not solve the problem. More capacity does not solve it. The fix is at the coordination layer, in how the agent approaches the boundary in the first place.

This is where Tethral lives. Not in the output. In the space between the systems that produce it.

When devices interact with agents. When agents interact with each other. When digital systems cross into physical spaces and hit the messy, latent, unpredictable reality of hardware that was never designed for agentic workflows. When system personalities collide, and by personalities I mean the behavioral signatures of systems built with different assumptions about timing, format, authority, and failure. That collision space is where the actual work of coordination happens. It is almost entirely unobserved. And we are building the instruments to observe it.

Asking why we work on the interaction instead of on better agents is like asking why a hospital invests in coordination between departments instead of hiring better doctors. The doctors are excellent. The problem is that the handoff between the ER and radiology loses information, adds latency, and produces errors neither department can see because each one's metrics look fine. The quality of the individual components is not the bottleneck. The interaction between them is.

What This Means

The agentic AI industry is building increasingly capable agents on top of increasingly unobserved interactions. The agents get smarter. The interactions get more opaque. The gap between what the output suggests and what actually happened underneath gets wider with every generation of model improvement.

That gap is not sustainable. Not economically, because the hidden friction costs are compounding. Not operationally, because degradation you cannot see is degradation you cannot fix. And not safely, because as these systems move into physical spaces, into homes, into rooms where people live, the consequences of unobserved interaction failure stop being dashboard metrics and start being outcomes.

Most people's lives are framed by these boundaries. Work is digital. Home is physical. Health is one app. Calendar is another. Smart home is a third ecosystem that talks to neither. The phone is the bridge, and the person is the middleware, manually carrying context between systems because no coordination layer exists to do it for them.

We are building that layer. You cannot compose a seamless experience across systems if you do not know where the seams are, what they cost, and how they behave under load. The seamless experience is the product. The friction measurement is what makes it possible.

The output looks fine. The question is what it cost to make it look that way, and whether that cost is one we can keep paying.

This is part of an ongoing series on the foundational design principles behind Lifestyle AI.
