
A Coordination Problem Wearing the Costume of a Capacity Problem

The agentic economy has an infrastructure problem that almost everyone is misdiagnosing.

The diagnosis goes like this: AI agents generate enormous compute demand. The supply of GPUs, memory, power, and data center capacity is not keeping pace. Therefore the bottleneck is capacity, and the solution is to build more. More data centers, more chips, more gigawatts. The numbers are staggering but the logic is linear. Demand exceeds supply. Increase supply. Problem solved.

This diagnosis is wrong, though not because the capacity constraints are imaginary. They are real and severe. It is wrong because it mistakes a symptom for the disease. The actual problem is that a large and growing fraction of the compute agents consume produces nothing. Not failed transactions, which would at least be visible and countable, but invisible overhead. Coordination waste. The cost of agents interfering with each other in ways that nobody designed and nobody measures.

The agentic economy will not stall because we cannot build enough infrastructure. It will stall because we are burning the infrastructure we build.

The steam engine had this problem first

In the early decades of industrial steam power, factories wasted between 80 and 95 percent of the energy their boilers produced. The engines worked. The fuel was available. The problem was not capacity but the absence of any coordination mechanism between the energy source and the work being performed. Steam escaped through leaks, built up in cylinders that were not ready for it, drove pistons at the wrong moments, and dissipated as heat into factory floors. The energy was there. It was just destroying itself on the way to being useful.

The solution was not bigger boilers. It was the governor: a device that read the behavioral state of the engine through its speed, which rises and falls with load, and adjusted steam flow before waste occurred. James Watt's flyball governor did not add energy to the system. It prevented the system from destroying its own output.

Agentic AI is in its pre-governor era.

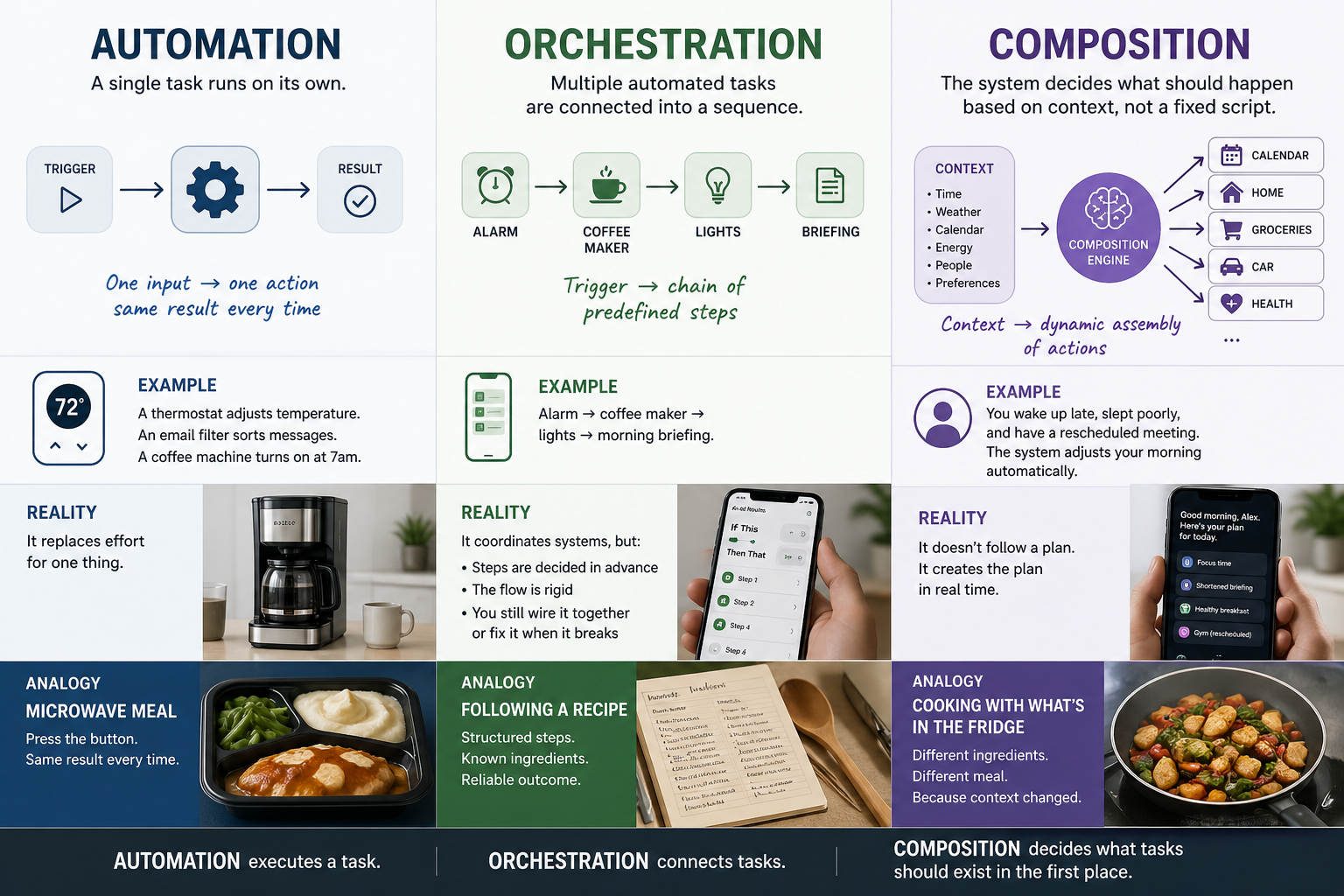

Agents are deployed into production environments that were designed for request-response workloads, and when their interactions generate waste, the industry's response is to add capacity. The waste patterns are specific and well-documented, even if their aggregate cost is not, and they share a common structure: agents acting reasonably in isolation produce unreasonable outcomes in combination.

Consider retry behavior. When an agent receives a transient error and retries, it does so at the same moment that dozens of other agents retry the same endpoint, because nothing coordinates the timing of their recovery attempts. The result is a retry spiral in which the original transient failure becomes a sustained one, amplified by the very mechanism designed to recover from it. A single failed request becomes a hundred, and the capacity consumed by those hundred retries produces zero value.
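A toy simulation makes the synchronization effect concrete. This is a minimal sketch under stated assumptions, not a model of any real system: every agent fails at the same instant, every retry also fails, and `peak_retry_load` and all of its parameters are hypothetical names chosen for illustration. Randomized backoff (jitter) is used here only as a familiar stand-in for desynchronized timing; it is a local technique, not the coordination layer discussed later.

```python
import random
from collections import Counter

def peak_retry_load(num_agents, base_delay_ms, attempts, jitter,
                    bucket_ms=10, seed=0):
    """Return the largest number of retries landing in any one time bucket.

    Assumption: all agents see the initial failure at t=0 and every retry
    fails, so each agent performs exactly `attempts` retries.
    """
    rng = random.Random(seed)
    buckets = Counter()
    for _ in range(num_agents):
        t = 0.0
        for attempt in range(attempts):
            ceiling = base_delay_ms * (2 ** attempt)
            # Fixed backoff: every agent waits exactly the same time.
            # Jitter: each agent waits a uniformly random time up to the ceiling.
            delay = rng.uniform(0, ceiling) if jitter else ceiling
            t += delay
            buckets[int(t // bucket_ms)] += 1
    return max(buckets.values())

# 100 agents, 100 ms base delay, 3 retries each.
synchronized = peak_retry_load(100, 100, 3, jitter=False)
jittered = peak_retry_load(100, 100, 3, jitter=True)
print("peak retries per 10 ms, identical timing:", synchronized)
print("peak retries per 10 ms, randomized timing:", jittered)
```

With identical timing, all 100 agents land in the same 10 ms bucket on every retry. With randomized timing the same number of retries is spread across many buckets, so the peak the endpoint has to absorb is much lower.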

Or consider what happens when a high-level intent fans out to sub-tasks, and each sub-task fans out further. A purchase intent becomes product queries, price comparisons, review lookups, inventory checks, fraud assessments, and payment authorizations, each of which triggers its own downstream processing. The fanout grows exponentially with each layer while the intent that triggered it remains a single request, and most of the resulting compute is redundant, duplicative, or directed at services already saturated by other agents doing exactly the same thing.
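The arithmetic of that fanout is easy to sketch. The example below assumes, purely for illustration, that every task spawns the same number of sub-tasks at every layer; real workflows are uneven, and `total_calls` is a hypothetical helper. The branching factor of 6 mirrors the six sub-task types listed above, while the depths are assumptions.

```python
def total_calls(branching, depth):
    """Count every call in a uniform fanout tree, including the original intent."""
    return sum(branching ** level for level in range(depth + 1))

# One purchase intent, six sub-tasks per task, at increasing depth.
for depth in range(1, 4):
    print(f"depth {depth}: {total_calls(6, depth)} calls for one intent")
```

At one layer the intent costs 7 calls, at two layers 43, and at three layers 259, all in service of a single purchase.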

These patterns compound. Agents from different tenants competing for the same processing queues create artificial bottlenecks that ripple outward, forcing overprovisioning in systems that were not themselves under strain. A failure in one service propagates through agent workflows that span multiple services, so a localized issue becomes systemic before anyone identifies the origin. And compute provisioned for peak agentic demand sits idle between bursts, burning power and capital for capacity that produces nothing, because the peaks are unpredictable and the valleys are invisible to the billing system.

Every one of these patterns converts infrastructure investment into waste. And the industry's dominant response, overprovisioning, treats that waste as an acceptable cost of doing business. Provision for the worst case. Add headroom. Deploy more queues.

At small scale, this is viable. At the scale the agentic economy is approaching, it is not.

The scale the agentic economy is approaching

McKinsey projects that agentic commerce will mediate $3 to $5 trillion in global retail revenue by 2030, a figure that covers goods only, excludes services, and does not account for the B2B market where Gartner separately forecasts $15 trillion flowing through agent-mediated exchanges by 2028. Adobe has documented a 4,700% year-over-year increase in AI-generated traffic to U.S. retail sites. The inference market is projected to more than double by 2030, from $106 billion to $255 billion. AI data center capital expenditure reached $400 to $450 billion in 2026 and is projected to hit $1 trillion by 2028. Power demand for AI data centers hit 21 gigawatts in 2025; RAND projects 327 gigawatts by 2030, which is more than most nations consume.

Bain calculates that meeting this projected demand requires $500 billion in annual capital expenditure, corresponding to roughly $2 trillion in annual revenue to sustain that spending. That revenue does not exist yet.

These numbers describe the capacity challenge, and the capacity challenge is real. But they assume that agents are efficient consumers of the compute they are allocated, and the coordination waste patterns described above mean they are not. At current levels of agentic traffic, the waste is absorbed by headroom. At the levels these projections contemplate, the waste exceeds the headroom that can be provisioned at any price.

The binding constraint on the agentic economy is not how much compute we can build. It is how much of the compute we build produces value versus how much is consumed by agents interfering with each other.

Why overprovisioning breaks

Overprovisioning works when the waste is a fixed percentage of allocated compute. If agents waste 20% of allocated compute on coordination failures, only 80% of what you provision does useful work, so you provision 25% above projected demand and absorb the loss. Expensive, but tractable.
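The 20%-to-25% step generalizes. If a fraction w of provisioned compute is wasted, then useful work is (1 - w) of capacity, and the headroom needed above useful demand is w / (1 - w). A minimal sketch, where `required_headroom` is a hypothetical helper name:

```python
def required_headroom(waste_fraction):
    """Extra capacity needed, as a fraction of useful demand.

    If `waste_fraction` of provisioned compute is lost, capacity must equal
    demand / (1 - waste_fraction), so headroom = waste / (1 - waste).
    """
    if not 0 <= waste_fraction < 1:
        raise ValueError("waste_fraction must be in [0, 1)")
    return waste_fraction / (1 - waste_fraction)

for waste in (0.2, 0.5, 0.8):
    print(f"waste {waste:.0%} -> headroom {required_headroom(waste):.0%}")
```

The relationship is not linear: 20% waste calls for roughly 25% headroom, 50% waste for roughly 100%, and 80% waste for roughly 400%.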

The trouble is that agent coordination waste is not a fixed percentage. It is a function of agent density, and it moves in the wrong direction. As more agents operate in shared environments, the probability of correlated behavior increases: more agents retrying the same endpoints at the same moment, more agents fanning out to the same downstream services simultaneously, more tenants competing for the same processing queues. The waste fraction is not 20%. It is a variable that grows with the system it is embedded in.

What this means in practice is that as the agentic economy scales, the ratio of waste to useful work does not hold steady. It degrades. The more agents you deploy, the more compute each agent wastes, because the density of uncoordinated interactions increases faster than the capacity to absorb them. The infrastructure gap is not static. It is accelerating.
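To see why a density-dependent waste fraction behaves so differently from a fixed one, consider a toy model. Everything here is an assumption made for illustration: the saturating curve in `waste_fraction`, the `half_waste_density` parameter (the agent count at which half of provisioned compute is wasted), and the idea that each agent needs one unit of useful compute. Nothing in it is measured data.

```python
def waste_fraction(num_agents, half_waste_density=1000):
    """Hypothetical curve: waste rises with agent density and approaches 1."""
    return num_agents / (num_agents + half_waste_density)

def capacity_needed(num_agents, useful_per_agent=1.0):
    """Capacity to provision so that useful work survives the waste."""
    useful = num_agents * useful_per_agent
    return useful / (1 - waste_fraction(num_agents))

for agents in (100, 1_000, 10_000):
    print(f"{agents:>6} agents: waste {waste_fraction(agents):.0%}, "
          f"capacity needed {capacity_needed(agents):,.0f} units")
```

Under these assumptions, growing from 1,000 to 10,000 agents multiplies useful work by 10 but multiplies the capacity required by about 55. The exact curve is invented; the point is only that any waste fraction that rises with density makes required capacity grow faster than the useful work it supports.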

Somewhere between the early adoption phase, where existing infrastructure absorbs the load comfortably, and the mature phase, where McKinsey's trillions circulate, the waste fraction crosses a threshold where overprovisioning cannot keep pace. Not because the capital is unavailable, but because the headroom required grows faster than the capacity that can be built. That threshold is the wall the agentic economy will hit if the current infrastructure trajectory holds, and right now there is very little reason to expect that trajectory to change.

What a governor looks like

Closing the gap requires infrastructure that operates before the waste occurs. Not monitoring that detects failures after they have already propagated, and not circuit breakers that trip after the damage has compounded, but a coordination layer that reads behavioral signals and intervenes before correlated agent actions become correlated agent failures.

The behavioral signals are specific. Timing patterns that indicate synchronized retry behavior. Frequency signatures that reveal fanout amplification in its early stages. Sequence anomalies that predict queue saturation before it arrives. Load shapes that distinguish organic demand from cascading interference. These signals are readable without inspecting the content of what agents are doing, because they are structural, not semantic. They exist in the rhythm and shape of agent behavior, not in the meaning of agent requests.

A coordination layer that reads these signals can do what Watt's governor did for steam: regulate flow before waste occurs. It can hold an agent's request for 200 milliseconds to desynchronize it from a developing retry storm, or shape fanout by deferring lower-priority sub-tasks when downstream services show early signs of saturation. It can route around emerging contention before it becomes a bottleneck, and contain blast radius by recognizing propagation patterns before they reach critical mass. None of these interventions require understanding what the agent is trying to accomplish. They require understanding how the agent is behaving in relation to other agents. The coordination is pre-semantic. It operates on behavioral geometry, not content.
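One of these interventions, the short desynchronizing hold, can be sketched in a few lines. This is an illustrative toy under stated assumptions, not a description of any existing product or of how such a layer must be built: the class name `DesyncGovernor`, the sliding-window burst detector, and the thresholds are all hypothetical. The only value taken from the text is the 200 millisecond maximum hold. Note that the governor sees only an endpoint label and an arrival time, never the content of the request.

```python
import random
from collections import defaultdict, deque

class DesyncGovernor:
    """Toy governor: holds requests briefly when arrivals at an endpoint bunch up."""

    def __init__(self, window_ms=50, burst_threshold=20, max_hold_ms=200, seed=None):
        self.window_ms = window_ms
        self.burst_threshold = burst_threshold
        self.max_hold_ms = max_hold_ms
        self._rng = random.Random(seed)
        self._arrivals = defaultdict(deque)  # endpoint -> recent arrival times

    def hold_for(self, endpoint, now_ms):
        """Return how long (in ms) to hold a request that arrives at now_ms."""
        recent = self._arrivals[endpoint]
        recent.append(now_ms)
        # Drop arrivals that have aged out of the sliding window.
        while recent and recent[0] <= now_ms - self.window_ms:
            recent.popleft()
        if len(recent) <= self.burst_threshold:
            return 0.0  # ordinary traffic passes straight through
        # Burst detected: spread this request out by a random hold.
        return self._rng.uniform(0, self.max_hold_ms)

governor = DesyncGovernor(seed=1)
# 100 agents hit the same endpoint in the same millisecond.
holds = [governor.hold_for("checkout", now_ms=1000) for _ in range(100)]
passed = sum(1 for h in holds if h == 0.0)
print(f"{passed} requests passed immediately, {len(holds) - passed} were held")
```

The first 20 requests in the burst pass untouched and the remaining 80 are spread across the next 200 ms. A production layer would need far better signals than a simple count, but the shape of the intervention is the same: act on timing, not on meaning.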

This is not a feature to be added to existing infrastructure. It is a missing layer. And the difference between an agentic economy that reaches the scale McKinsey projects and one that stalls at a fraction of that number may come down to whether this layer gets built before the waste ratio makes scaling impossible.

The deeper question is why the industry keeps reaching for capacity when the problem is coordination. I think the answer is that we have not yet reckoned with what agents actually are, and until we do, we will keep building infrastructure for the wrong kind of demand. We will keep sizing boilers when we should be building governors.

The capacity problem is real, and it is the visible problem, and it is attracting the visible investment. The coordination problem is the one that determines whether that investment produces an agentic economy or a very expensive bonfire.
