
AI Agents Are Not Solo Actors on Mopeds. They Are Micro-Platforms With Mobility.

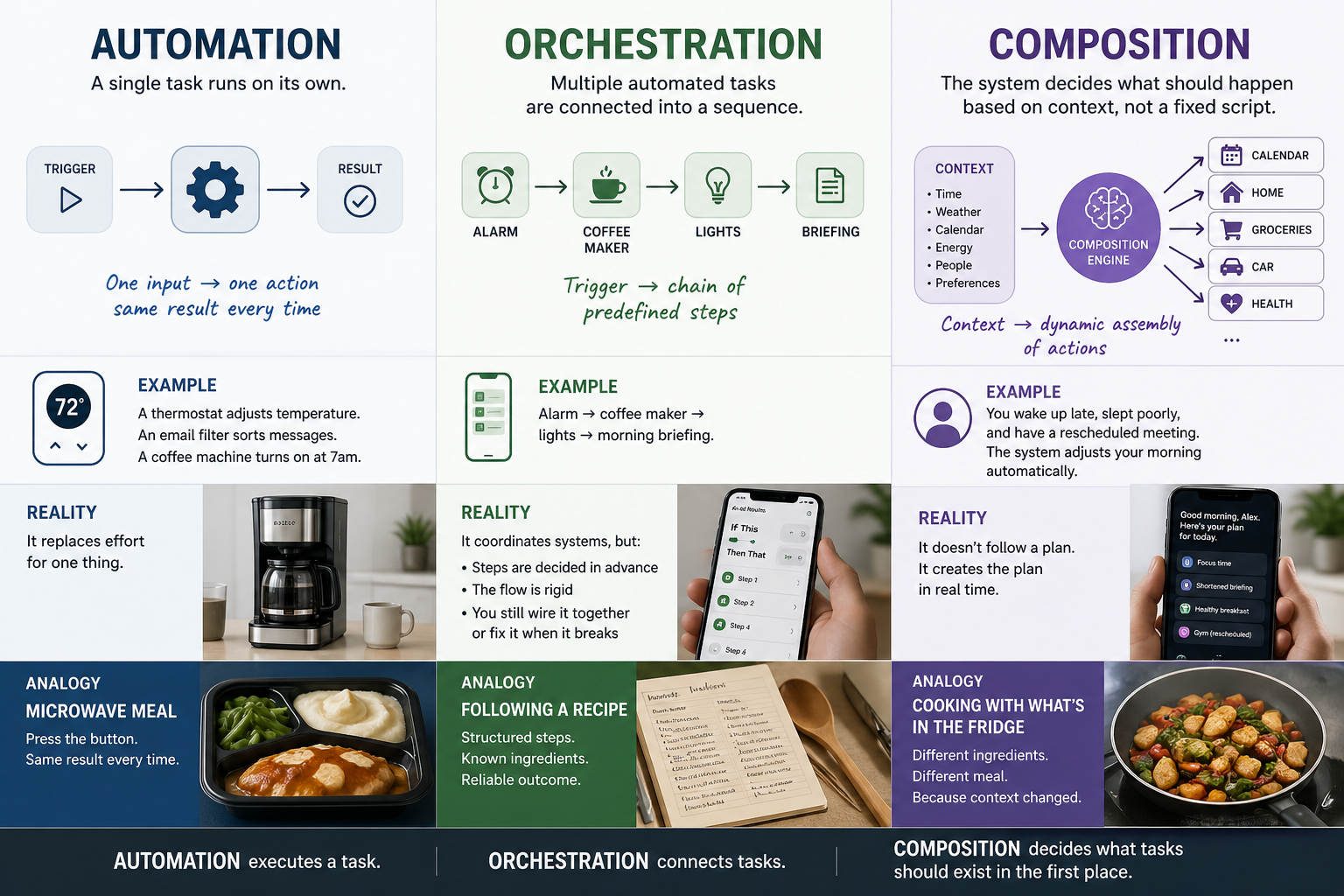

The prevailing mental model of an AI agent is a bot with a moped. A small, single-purpose thing that zips around the internet running errands, buying your groceries, booking your flights, comparing prices and coming back with a receipt. McKinsey's $5 trillion agentic commerce forecast counts transactions as if agents are couriers. Gartner's adoption curves count deployments as if agents are headcount. Security assessments count vulnerabilities as if agents are devices.

The mental model is wrong. And because it is wrong, the math, the risk assessment, the infrastructure planning, and the policy conversation built on top of it are wrong in ways that compound silently.

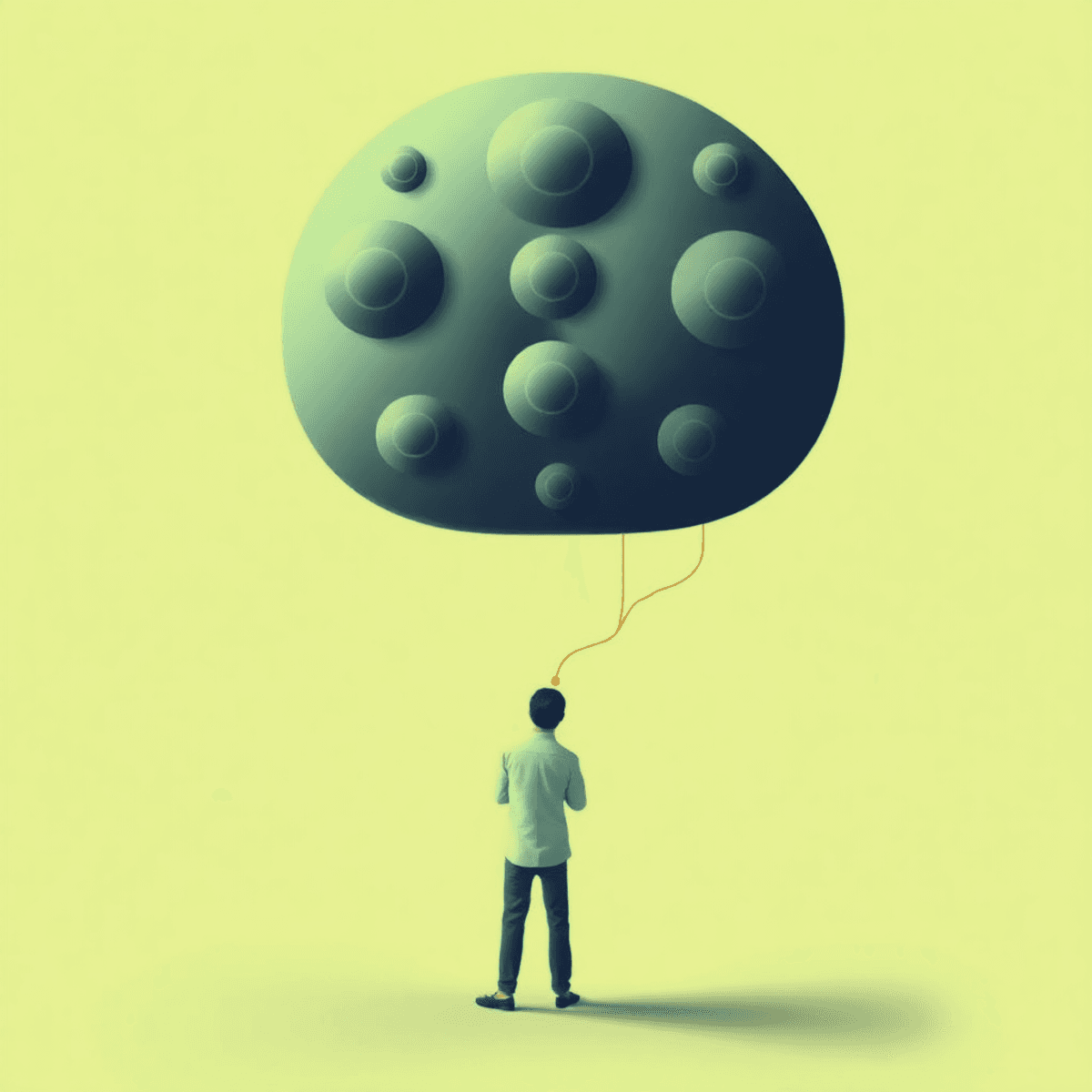

An AI agent is not a bot on a moped. Its large language model is one component among many, and the other components matter just as much: tool integrations, memory systems, retrieval pipelines, execution environments, authentication credentials. An agent ingests from its environment, produces outputs that other systems consume, communicates bidirectionally with services and other agents, and affects every system it touches. Increasingly, it acquires new capabilities at runtime, which means the agent that begins a workflow is not the same agent that finishes it. It has changed in the process.

An agent is a micro-platform with mobility. Getting this wrong is not a semantic issue. It is the error that makes the rest of the conversation about agentic AI incoherent.

Platforms mediate. So do agents.

Amazon does not sell products. It mediates between buyers, sellers, payment processors, logistics networks, and review ecosystems, creating the context in which transactions become possible and setting the terms under which participants interact. What gets discovered, how trust is established, where data flows, how disputes are resolved: these are platform decisions. Shopify, Stripe, and every other entity we call a "platform" do some version of the same thing. They sit between other systems and define the conditions of exchange.

AI agents do all of this. A sophisticated purchasing agent mediates between user intent, merchant APIs, payment systems, product databases, and shipping providers, determining what gets surfaced and what gets ignored, establishing trust signals or failing to. It sets terms algorithmically rather than contractually, but the structural role is identical.

The critical difference is that platforms are anchored and agents are not.

Amazon operates at amazon.com. Its infrastructure is provisioned, secured, and governed as a bounded system with an enumerable attack surface and load patterns that are statistical and learnable over time. The security model can be comprehensive precisely because the boundary is fixed and knowable.

An agent has no fixed boundary. It traverses environments, calling APIs on one platform, carrying results to another, making decisions that affect a third. Its operational perimeter is not a domain name but the set of all systems it has accessed or managed to access, and that set changes during execution. The boundary is in motion because the agent is in motion.

The properties that have no platform equivalent

Agents grow in capability, not just in data. When a platform learns your browsing history, it becomes more informed about you but structurally unchanged. When an agent encounters a task that requires a function it does not have, it can discover and integrate that function at runtime. The capability surface of a platform is designed. The capability surface of an agent is enacted. This is a difference in kind, not degree, and it has consequences for every assumption we make about how to provision, secure, and govern these systems.

Agents also produce for ingestion by other agents, which is something platforms almost never do. A platform serves content to an endpoint: a user, a screen, a device. An agent produces artifacts that become inputs for other agents, whose outputs become inputs for still others. This means agents are not endpoints in a pipeline but nodes in a graph where every node is simultaneously producer and consumer, and the graph reconfigures itself continuously as agents interact.
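To make the producer-consumer structure concrete, here is a minimal Python sketch under stated assumptions. Each edge records that one agent consumed an artifact another agent produced; the class name `ArtifactGraph` and the agent labels are hypothetical and not drawn from any protocol. It illustrates two claims from the paragraph: a single agent holds both roles at once, and one new interaction at runtime can change what sits downstream of every node, including routing an agent's outputs back to it as inputs.

```python
from collections import defaultdict


class ArtifactGraph:
    """Hypothetical model: an edge (p, c) means agent c consumed an artifact agent p produced."""

    def __init__(self) -> None:
        self.edges: set[tuple[str, str]] = set()

    def consume(self, producer: str, consumer: str) -> None:
        # Edges appear at runtime as agents interact; nothing is declared up front.
        self.edges.add((producer, consumer))

    def roles(self) -> dict[str, set[str]]:
        # A node can be producer, consumer, or (typically) both.
        r: dict[str, set[str]] = defaultdict(set)
        for p, c in self.edges:
            r[p].add("producer")
            r[c].add("consumer")
        return dict(r)

    def downstream(self, agent: str) -> set[str]:
        # Every agent whose inputs transitively depend on this agent's outputs.
        seen: set[str] = set()
        stack = [agent]
        while stack:
            node = stack.pop()
            for p, c in self.edges:
                if p == node and c not in seen:
                    seen.add(c)
                    stack.append(c)
        return seen


g = ArtifactGraph()
g.consume("planner", "buyer")
g.consume("buyer", "auditor")
print(sorted(g.downstream("planner")))  # ['auditor', 'buyer']
g.consume("auditor", "planner")  # one new interaction closes a loop
print(sorted(g.downstream("planner")))  # ['auditor', 'buyer', 'planner']
```

The linear scan over all edges in `downstream` keeps the sketch short; anything larger would index edges by producer.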

The interaction model that results from this is not request-response. It is metabolic.

That word is not a metaphor chosen for color. It is a precise description of the interaction dynamics. Metabolism is the process by which an organism converts environmental inputs into energy, structure, and waste, with the conversion pathways themselves changing in response to what is available. Agent interaction works the same way. The system is not processing transactions in a fixed pipeline. It is digesting them, transforming inputs into outputs that feed further transformations, with the structure of the process emerging from the activity itself rather than from any designed architecture. The pathways are not fixed. The inputs determine the process, and the process reshapes the system performing it.

The evaluation that looks complete and is not

Once you accept that agents are micro-platforms, the natural move is to evaluate them using platform frameworks. This move is partially correct, which is exactly why it is dangerous.

Platform security includes authentication, authorization, encryption, and audit logging. Agent security includes these too: the Model Context Protocol has OAuth-based authorization, and the Agent-to-Agent Protocol has capability-based Agent Cards. These are real mechanisms built by serious engineers. Platform provisioning uses auto-scaling, load balancing, and capacity planning, and agent provisioning uses the same cloud infrastructure, the same GPU clusters, the same scaling toolchains. Platform efficiency optimizes with caching, CDNs, and query optimization; agent efficiency optimizes with quantization, batched decoding, and model distillation. The disciplines are recognizable. The tooling overlaps.

The checklist returns all green. Security, provisioning, efficiency: evaluated, addressed, managed. The one-to-one comparison between platform properties and agent properties appears binary and complete.

It is not complete. It is a partial map that presents itself as a whole one, and the confidence that an all-green checklist produces is where the actual danger lives. The dimensions the comparison misses (mobility, capability growth, metabolic interaction, dynamic attack surfaces, emergent workflow generation) are not gaps at the margins. They are the dimensions where the significance of agents as a new class of entity resides. The platform lens does not reveal agents as more dangerous than they are. It makes them look less significant than they are. It domesticates them, rendering a fundamentally new kind of infrastructure challenge legible through a framework that cannot see what makes it new.

The result is that serious, competent people apply the platform lens and conclude that agents are manageable, that existing frameworks are adequate, that the problem is familiar. The accurate conclusion is that their frameworks are showing them the part of the problem that resembles what they already understand and hiding the part that does not.

What the platform lens cannot see, demonstrated

The pattern is visible in the security failures that have already occurred, which share a structural signature that platform security was never built to recognize.

Among 2,614 MCP server implementations analyzed by Endor Labs in their 2025 dependency management report, 82% contained file system operations vulnerable to path traversal, 67% used APIs susceptible to code injection, and 34% had command injection vectors. The MCP authorization specification itself has been assessed by Red Hat's security team as fundamentally inadequate for enterprise deployment. These numbers are striking, but the individual breaches are more instructive.

A malicious MCP server exfiltrated a user's entire WhatsApp conversation history by combining tool poisoning with a legitimate messaging integration. The attack worked because the agent's trust boundary included every tool it was connected to, which meant the malicious tool could exploit that inclusion to reach the legitimate one's data. This is not a perimeter breach. There was no perimeter to breach. The attack surface was the agent's own connectivity.

An agent connected to the official GitHub MCP server was hijacked when a prompt injection embedded in a public issue directed it to leak private repository contents, including salary data, through a public pull request. The agent had a single over-privileged access token, and the attacker did not need to steal it. They convinced the agent to use its own legitimate access for illegitimate purposes. The capability itself became the vector.

A fraudulent package posing as a Postmark email integration silently BCC'd all outbound correspondence to an attacker's server. A Cursor agent processing Supabase support tickets executed embedded SQL commands that exfiltrated authentication tokens. In both cases the agents were operating within their designed parameters, doing what they were built to do. The vulnerability was that what they were built to do included ingesting untrusted input and acting on it with privileged access, which is not a bug in implementation but a structural consequence of how agents work.

The pattern across all four incidents is the same: the attack surface is not a boundary but a trajectory. It changes with every action the agent takes, every tool it connects to, every data source it ingests, every output it produces. Platform security assumes a knowable, enumerable perimeter that can be hardened in advance. Agent security requires reasoning about a perimeter that the agent itself is constructing in real time, and no platform security framework in existence was designed for that.
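The difference between a perimeter and a trajectory can be sketched in a few lines of Python. This is an illustrative model only, assuming that each agent action can be summarized as the set of systems (tools, data sources, output sinks) it touched; `AgentTrajectory` and the system labels are hypothetical names that loosely follow the GitHub incident above. The point it shows is that the surface is a function of execution history, so a review that enumerates the declared systems before execution misses everything the agent reaches afterward.

```python
from dataclasses import dataclass, field


@dataclass
class AgentTrajectory:
    """Hypothetical model: the attack surface as a function of execution history."""

    declared: frozenset[str]  # systems enumerated before execution (the "perimeter")
    steps: list[frozenset[str]] = field(default_factory=list)

    def act(self, touched: set[str]) -> None:
        # Record the tools, data sources, and output sinks a single action touched.
        self.steps.append(frozenset(touched))

    def surface_at(self, step: int) -> frozenset[str]:
        # Surface after `step` actions: declared systems plus everything touched so far.
        surface = set(self.declared)
        for touched in self.steps[:step]:
            surface |= touched
        return frozenset(surface)

    def undeclared(self) -> frozenset[str]:
        # What a perimeter review done before execution would have missed.
        return self.surface_at(len(self.steps)) - self.declared


# Hypothetical labels, loosely following the GitHub incident.
t = AgentTrajectory(declared=frozenset({"github_api"}))
t.act({"github_api", "public_issue"})  # ingests attacker-controlled text
t.act({"private_repo"})                # reads with its own legitimate access
t.act({"public_pull_request"})         # writes to a public sink
for i in range(len(t.steps) + 1):
    print(i, sorted(t.surface_at(i)))
print(sorted(t.undeclared()))  # ['private_repo', 'public_issue', 'public_pull_request']
```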

The protocol landscape is building the wrong layer

The current ecosystem of agentic protocols is the institutional expression of the platform-lens error.

MCP standardizes agent-to-tool integration, essentially a plug-and-socket model borrowed from platform API design. A2A standardizes agent-to-agent communication using a service mesh pattern from microservices architecture. ACP adds structured messaging and dynamic discovery in the tradition of enterprise service buses, and the Universal Commerce Protocol, introduced by Google in early 2026, standardizes how agents interact with businesses and payment processors. The Linux Foundation's Agentic AI Foundation is working to unify these under shared governance, with A2A and ACP already merging.

Every one of these protocols solves a real problem, and every one solves it by making agents behave more like the platforms we already know how to manage, standardizing the shape of agent interactions into patterns that fit existing evaluation frameworks.

But agents do not hold their shape. That is the point of everything above. An agent's interaction pattern is not a stable interface that a protocol can define once and expect to hold. It is emergent, changing as the task evolves, as capabilities expand, as the environment shifts. The protocols are standardizing the socket. The problem is the electricity.

What the agentic economy requires is not a unified schema that makes the internet legible to agents through consistent interfaces, because that approach assumes agents are consumers of a structured world. They are not. They are participants in an unstructured one, and their participation generates structure transiently, locally, and differently each time. Standardizing the entire internet into a machine-readable schema is not a scaling strategy. It is a category error that mistakes the terrain for the map.

The alternative is coordination that does not require agents to conform to a standard interaction pattern in order to be coordinated: a layer that reads behavioral signals (the timing, frequency, sequence, and geometry of agent actions) and coordinates from those signals without inspecting content or requiring declared intentions. Not standardizing every road, but reading the traffic. The first approach works when the road network is fixed. The second works when the roads are being built by the traffic itself.
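One way to picture coordination from behavioral signals alone is the Python sketch below. It is an illustration under stated assumptions, not a description of any existing system: each event carries only an agent identifier, a timestamp, and a coarse action kind, with no payload; the features are frequency (event count), timing (mean gap between events), and sequence (counts of consecutive action pairs); and the pacing policy in `coordinate`, along with its `min_gap` threshold, is hypothetical. The "geometry" of agent actions is not modeled here.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Event:
    """A behavioral signal only: who acted, when, and what kind of action. No content."""

    agent: str
    t: float   # seconds
    kind: str  # hypothetical coarse labels, e.g. "call", "read", "emit"


def profile(events: list[Event]) -> dict[str, dict]:
    """Per-agent frequency, timing, and sequence features, computed without any payload."""
    by_agent: dict[str, list[Event]] = defaultdict(list)
    for e in sorted(events, key=lambda ev: ev.t):
        by_agent[e.agent].append(e)
    out = {}
    for agent, evs in by_agent.items():
        gaps = [b.t - a.t for a, b in zip(evs, evs[1:])]
        out[agent] = {
            "count": len(evs),
            "mean_gap": mean(gaps) if gaps else None,
            "bigrams": Counter((a.kind, b.kind) for a, b in zip(evs, evs[1:])),
        }
    return out


def coordinate(profiles: dict[str, dict], min_gap: float) -> dict[str, str]:
    """Hypothetical policy: pace agents whose mean inter-event gap falls below min_gap."""
    return {
        agent: "pace" if p["mean_gap"] is not None and p["mean_gap"] < min_gap else "allow"
        for agent, p in profiles.items()
    }


events = [
    Event("a", 0.0, "call"), Event("a", 0.1, "emit"), Event("a", 0.2, "call"),
    Event("b", 0.0, "read"), Event("b", 5.0, "emit"),
]
profiles = profile(events)
print(coordinate(profiles, min_gap=1.0))  # {'a': 'pace', 'b': 'allow'}
```

The design choice worth noticing is what the functions never receive: message bodies, tool arguments, or declared goals. Everything the policy knows comes from when and how often agents act, and in what order.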

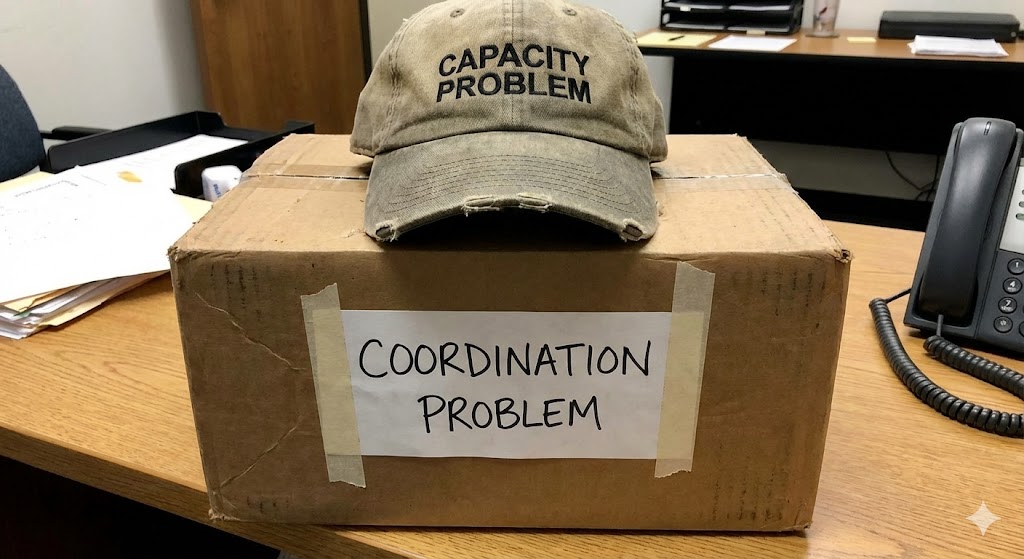

The unit determines the infrastructure

If agents are individuals, the infrastructure they need is tools: better APIs, smarter orchestration, more compute. The problem is technical and incremental, and the current trajectory of protocol development and capacity investment is adequate to address it.

If agents are micro-platforms with mobility, the infrastructure they need does not yet exist. It is coordination infrastructure that operates below the application layer, that does not depend on agents conforming to a standard, and that functions in an environment where every participant, every interaction pattern, and every capability surface is changing continuously.

What McKinsey calls "$5 trillion in commerce" will not be $5 trillion in transactions that resemble faster versions of online shopping. It will be $5 trillion in interactions between mobile, growing, metabolic systems that are simultaneously producing and consuming, discovering and being discovered, extending and being extended. The terrain is not the internet as we know it, with anchored .com addresses and predictable traffic patterns. It is an elastic field of interactions between entities that are building and rebuilding themselves as they move through it.

The first step toward building infrastructure for that terrain is getting the unit of analysis right. Agents are not bots on mopeds. They never were. And every framework built on the assumption that they are is producing confidence about a problem it cannot fully see.
