
I Controlled My Home With My Brainwaves Through Tethral. This Is What It Looks Like When AI Leaves the Screen.

Do not get me wrong. I love what OpenAI, Anthropic, and the broader AI community are doing. The progress in reasoning, language, multimodal understanding, and tool use over the past two years has been extraordinary. I use these tools every day. I build with them. I am grateful for what they have made possible.

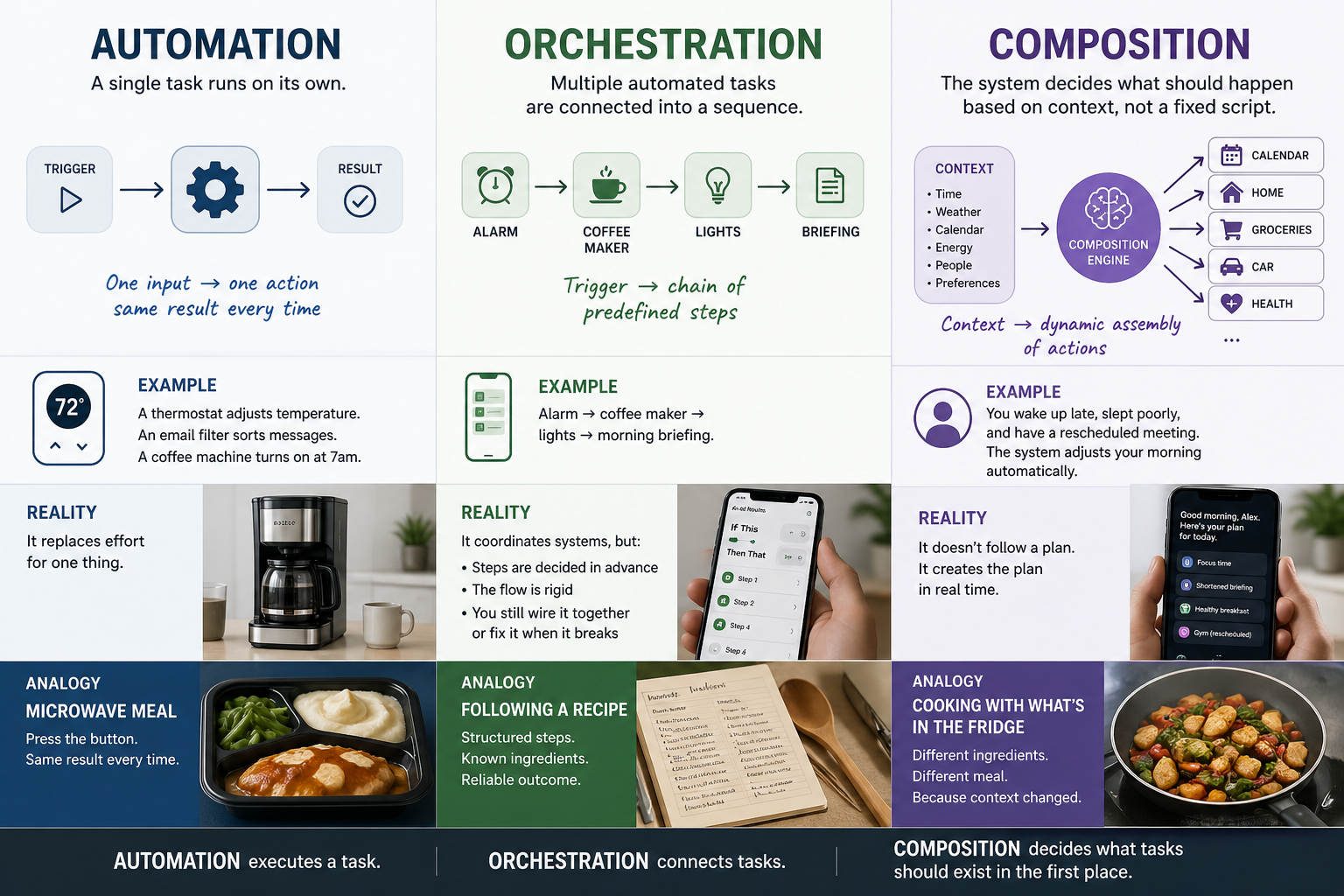

But most of that progress lives on a screen. You open a chat window. You type a prompt. You read a response. Even the most advanced AI in the world, right now, mostly meets you in a text box. And the companies beginning to push beyond that (OpenAI entering the connected hardware space, others exploring voice and ambient interaction) are taking the first steps toward something I believe matters more than any model improvement: getting AI into the physical spaces where people actually live.

I have been writing recently about what AI-native experiences require, from the philosophical question of how humans relate to processes they cannot see, to the design challenge of measuring something that works by disappearing, to what happens when AI itself is the user encountering systems designed for humans. This article is about the next layer of that question. What does it look like when the interface is not a screen, not an app, and not even a voice command, but something closer to the way you already experience the world?

The BCI Was Real. It Was Also Limited. That Is the Point.

Last week I sat in a chair with a Muse S Athena brain-computer interface on my head and controlled devices in my home through Tethral. I dimmed lights. I shifted colors. I summoned a robot to deliver water. With a thought.

I want to be honest about what this was and what it was not. Consumer BCIs, including the Muse S Athena, are amazing pieces of technology, but they have a long way to go before they can control connected devices across all their dimensions, or respond to needs as they arise from biological conditions rather than only when you think to say something about them. The signal is noisy. The vocabulary of commands is small. The calibration takes patience.

But that is the point. Even in its early, limited form, a brain-computer interface connected to a coordination platform did something important: it demonstrated that the interaction between a person and their environment does not have to be mediated by a screen, an app, or even a spoken word. I expressed intent, and the environment responded. No menu. No skill list. No cylinder on my counter asking me to repeat myself.

The technology is early. The implications are not.

AI Deserves to Be More Than a Chat Window

Here is what I think the industry has not fully reckoned with. We built the most powerful reasoning systems in history and then confined them to the same interaction model as a customer service chatbot. A text box. A prompt. A response. That is where AI lives for most people, and for most people, that is where it stays.

Voice started to change that. Voice assistants brought AI out of the text box and into the room. That was a meaningful step. You could talk to your house. Your house could respond. For millions of people, that was the first time AI felt like it existed in the physical world rather than behind a screen.

But voice is one modality. And the connected technology industry, for reasons that have more to do with business models than human needs, built entire ecosystems around it as though it were the only one. The result is that "connected" came to mean "voice-activated," and the possibilities narrowed to what you could accomplish by talking to a speaker.

The real frontier is not any single input method. It is the transition from AI as something you visit on a screen to AI as something that coordinates the environment around you, regardless of how you choose to interact with it. Voice. Gesture. Proximity. Biometric signals. Context. Schedule. And yes, eventually, thought.

That is what the BCI demonstrated for me: not that brain control is ready to replace anything, but that the interaction surface for AI in physical spaces is far wider than the industry has been willing to build for.

The Smallness I Have Been Resisting

This is what I have been resisting about the connected technology industry. Not any single product or company. The framing.

A smart refrigerator was a wild concept in 2005. A doorbell camera was novel in 2013. A voice assistant that could set a timer and play music was, for a moment, a glimpse of the future. But those ideas calcified. They became the ceiling instead of the floor. And the industry built walled gardens around them, not because walled gardens serve the user, but because walled gardens are more profitable to build, sell, and repackage.

The result is an ecosystem that forces you to choose, and not based on what experience you want. Based on what is easiest for them to connect. What is most profitable for them to bundle. What fits inside their schema. That is not experience driven by human need. That is experience driven by product architecture.

And the incentive structure does not change. Apple's business model requires that your home run on HomeKit. Amazon's requires Alexa. Google's requires Assistant. None of them will build the coordination layer that makes a competitor's devices work as well as their own, because that coordination layer would undermine the lock-in that drives their revenue. This is not a problem that gets solved by waiting for incumbents to get around to it. The incentive runs in the opposite direction.

And what these ecosystems do not allow for is the simple fact that I do not want the same experience every single day.

The Boombox and the Translation Headphones

Some days I want the boombox. I love the aesthetic. I love the feel of it. The deliberate, physical presence. The way it announces itself in a room. There is something about the act of putting on a record, or pressing play on a physical device, that serves a need no voice assistant can fill.

But I also love my translation headphones. And those two experiences have almost nothing in common except that they both involve audio and they both serve me. One is analog ritual. The other is invisible utility. And I want both, on my terms, without a platform telling me which one fits their ecosystem.

That is the problem. Connected ecosystems force you into a fixed relationship with technology. A single mode. A single interaction style. A single vendor's idea of what your life should feel like. And because the architecture was designed for device compatibility rather than human experience, it cannot accommodate the reality that we are not fixed objects. We are not content with the same experience over and over. I may want my AI briefing at the start of every day, but on occasion I want to do something else entirely.

I have been building Tethral because I want to share that conviction with anybody who needs it.

When the Screen Is No Longer the Surface

I have written elsewhere in this series about the design challenges that emerge when AI coordinates environments rather than displaying interfaces. What does it look like when the system works by disappearing? How do you measure the quality of something that produces no signal when it is working correctly? Those are questions about designing for humans inside AI-coordinated spaces.

But there is a related question that sits underneath all of them: what happens to interaction design when the primary surface is no longer a screen?

Voice was the first answer. And it was a good one. It freed interaction from the rectangle in your hand and put it in the room. But voice is still a command-and-response model. You speak. The system listens. It responds. The turn structure is inherited from conversation, and that is fine for a lot of things.

The BCI experience showed me something different. There was no turn structure. There was no command. There was intent, expressed through a biological signal, translated by a coordination layer into environmental response. The interaction did not feel like talking to a system. It felt like the room was paying attention.

That is where interaction design needs to go. Not away from voice. Voice is valuable, and it is how Tethral's chief of staff works right now. But toward a world where voice is one option among many, where the ecosystem does not care which input method you use, and where the environment responds to your intent regardless of the channel through which you expressed it.
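To make "the environment responds to your intent regardless of the channel" a little more concrete, here is a minimal sketch of what channel-agnostic routing could look like. It is not Tethral's implementation. Every name in it (`Intent`, `from_voice`, `from_bci`, `Coordinator`, the device targets, the 0.8 confidence threshold) is hypothetical, and the BCI adapter assumes some upstream classifier has already turned raw signals into a label with a confidence score. The point is the separation: each input channel gets its own adapter, and the coordinator only ever sees a channel-neutral intent.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional

# Hypothetical sketch: none of these names come from Tethral or any BCI SDK.


@dataclass(frozen=True)
class Intent:
    """A channel-neutral request: what should happen, to what, and how much."""
    action: str                  # e.g. "dim", "fetch"
    target: str                  # e.g. "living_room_lights", "robot"
    value: Optional[str] = None  # e.g. "30" (percent) or "water"


def from_voice(transcript: str) -> Optional[Intent]:
    """Adapter for a voice channel: naive keyword matching on a transcript."""
    text = transcript.lower()
    if "dim" in text and "light" in text:
        return Intent("dim", "living_room_lights", "30")
    if "water" in text:
        return Intent("fetch", "robot", "water")
    return None


def from_bci(label: str, confidence: float, threshold: float = 0.8) -> Optional[Intent]:
    """Adapter for a BCI channel; assumes an upstream classifier produced `label`."""
    # Consumer BCI signals are noisy, so low-confidence classifications are dropped.
    if confidence < threshold:
        return None
    mapping = {
        "focus_lights": Intent("dim", "living_room_lights", "30"),
        "request_water": Intent("fetch", "robot", "water"),
    }
    return mapping.get(label)


class Coordinator:
    """Routes intents to device handlers without knowing which channel produced them."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[[Intent], str]] = {}

    def register(self, target: str, handler: Callable[[Intent], str]) -> None:
        self._handlers[target] = handler

    def dispatch(self, intent: Optional[Intent]) -> Optional[str]:
        if intent is None:
            return None  # nothing recognized (or too noisy to act on)
        handler = self._handlers.get(intent.target)
        return handler(intent) if handler else None


if __name__ == "__main__":
    hub = Coordinator()
    hub.register("living_room_lights", lambda i: f"lights -> {i.action} {i.value}%")
    hub.register("robot", lambda i: f"robot -> {i.action} {i.value}")

    # The same intent arrives through two different channels.
    print(hub.dispatch(from_voice("Please dim the lights")))
    print(hub.dispatch(from_bci("focus_lights", confidence=0.91)))
    # A low-confidence BCI reading is ignored rather than guessed at.
    print(hub.dispatch(from_bci("request_water", confidence=0.55)))
```

Under this design, supporting gesture, proximity, or an assistive switch would mean writing one more adapter; the coordinator and the device handlers would not need to change.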

And there is a dimension to this that goes well beyond personal preference. For people who experience disability, who cannot use voice, who interact with the world through assistive devices, prosthetics, or alternative input methods, the question of which interaction modes an ecosystem supports is not a feature request. It is the difference between participation and exclusion. I am saving that for its own article because it deserves more than a paragraph. But I will say this much: the people solving that problem are building the interaction paradigms the rest of us will be using in five years.

What Comes Next

The next article in this series is about what happens when you build an ecosystem that allows anyone, with anything, to connect and navigate the systems around them in the way they live their life. Not the way a brand decided they should live it. Not the way a protocol committee standardized it. The way they actually move through the world.

For some people, that is a change of pace. A boombox on Tuesday, translation headphones on Wednesday.

For others, it is the difference between participating in connected technology and being excluded from it.

Both of those are reasons to build. And both of those are reasons the industry needs to think bigger than the screen, the speaker, and the app.

Next: What does it look like to biohack your home? And coming soon: why accessibility, biohacking, and BCI are not edge cases. They are the frontier of normal.
