
What Sound Does Software Make?

In graduate school I made the mistake of applying game theory to the mathematics of human communication. I say "mistake" affectionately, because it was one of those productive wrong turns that eventually lead somewhere more interesting than where you were originally headed.

The classical approach to formal pragmatics treats communication as a kind of negotiation. Two interlocutors, each with their own information and intentions, exchange signals according to rules that both parties implicitly understand. Game theory provides an elegant framework for this: communication as a coordination game where the players converge on shared meaning through iterated strategic interaction. It is beautiful mathematics. It accounts for implicature, for ambiguity resolution, for the way speakers convey more than they literally say. And it assumes, as its foundational premise, that the players arrive at the exchange with nothing.

No history. No expectations about each other. No prior experience that shapes how they interpret the signals they receive. Just two rational agents, starting from scratch, negotiating meaning through the formal structure of the game.

This is where the productive mistake happened, because anyone who has ever had a conversation knows that this is not how communication works.

The context you carry in

You do not start a conversation from zero. You start from everything. From every conversation you have had before. From your expectations about who you are talking to, what they are likely to mean, what kind of exchange this is going to be. From your mood, your cultural context, your professional training, your experiences of how these kinds of conversations usually go. The signal is not processed in isolation. It is processed through the entire accumulated filter of your situatedness.

The game-theoretic approach to language is a wonderful exercise in what communication would look like if we were not ourselves when we did it. It strips away context to reveal structure, the way an anatomist strips away flesh to reveal bone. The structure is real. It is important. But it is not sufficient to explain what actually happens when two entities communicate, because what actually happens is saturated with context that the model deliberately excludes.

I spent years with this tension. The formal models were rigorous and the real phenomenon was messy, and the gap between them was where the interesting questions lived.

The subway, the jackhammer, and the question

Then I started thinking about machines.

Not about machine learning or artificial intelligence in the way those terms are used now, but about the more basic question of how machines communicate. Or rather, how machine intelligence communicates. And more specifically still, how disembodied machine intelligence communicates. Software.

Physical machines have a language that is immediately legible. Go down to the subway platform. Listen. The screech of metal on rail tells you the train is braking. The rhythm of the wheels tells you how fast it is moving. The whine of a motor under strain tells you it is working harder than it should. A jackhammer communicates its operation through vibration, through sound, through the physical consequences of its mechanical action. You do not need to read the jackhammer's source code to know what it is doing. Its body tells you.

What happens when the body is gone?

When a piece of software coordinates with another piece of software, there is no screech, no vibration, no whine under load. There is no body to communicate through. But the coordination still happens. And it still produces something analogous to sound, if you know how to listen.

The timing of a response is a signal. The pattern of retries is a signal. The shape of fanout, the acceleration of request frequency under pressure, the drift of latency distributions, the correlation of behavior across agents that share a dependency: all of this is the sound the machine makes. It is the language of coordination, expressed not through a physical body but through the behavioral patterns of interaction.
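As a rough illustration of what "listening" might mean in practice, here is a minimal Python sketch that pulls two of those signals, retry rate and drift in tail latency, out of a log of calls. The `Request` record and the `behavioral_signals` function are hypothetical, invented for this example. A real system would read these fields from whatever tracing or telemetry it already collects.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class Request:
    # Hypothetical record of one outbound call made by an agent.
    agent: str
    latency_ms: float
    attempt: int  # 1 for the first try, 2 or more for retries


def p95(values):
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    # Needs at least two data points.
    return quantiles(values, n=20)[-1]


def behavioral_signals(baseline, recent):
    """Summarize how an agent's behavior shifted between two time windows."""
    # Fraction of recent calls that were retries rather than first attempts.
    retry_rate = sum(r.attempt > 1 for r in recent) / len(recent)
    # How far the latency tail has moved relative to the baseline window.
    drift = p95([r.latency_ms for r in recent]) - p95([r.latency_ms for r in baseline])
    return {"retry_rate": retry_rate, "p95_drift_ms": drift}


baseline = [Request("a", 10.0, 1) for _ in range(4)]
recent = [Request("a", 30.0, n) for n in (1, 2, 1, 3)]
signals = behavioral_signals(baseline, recent)
```

Neither number is an error on its own. Read together, though, a rising retry rate and a widening latency tail are the software equivalent of a motor whining under load.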

The zero-sum illusion

Here is where the game theory comes back, in a form I did not expect when I started.

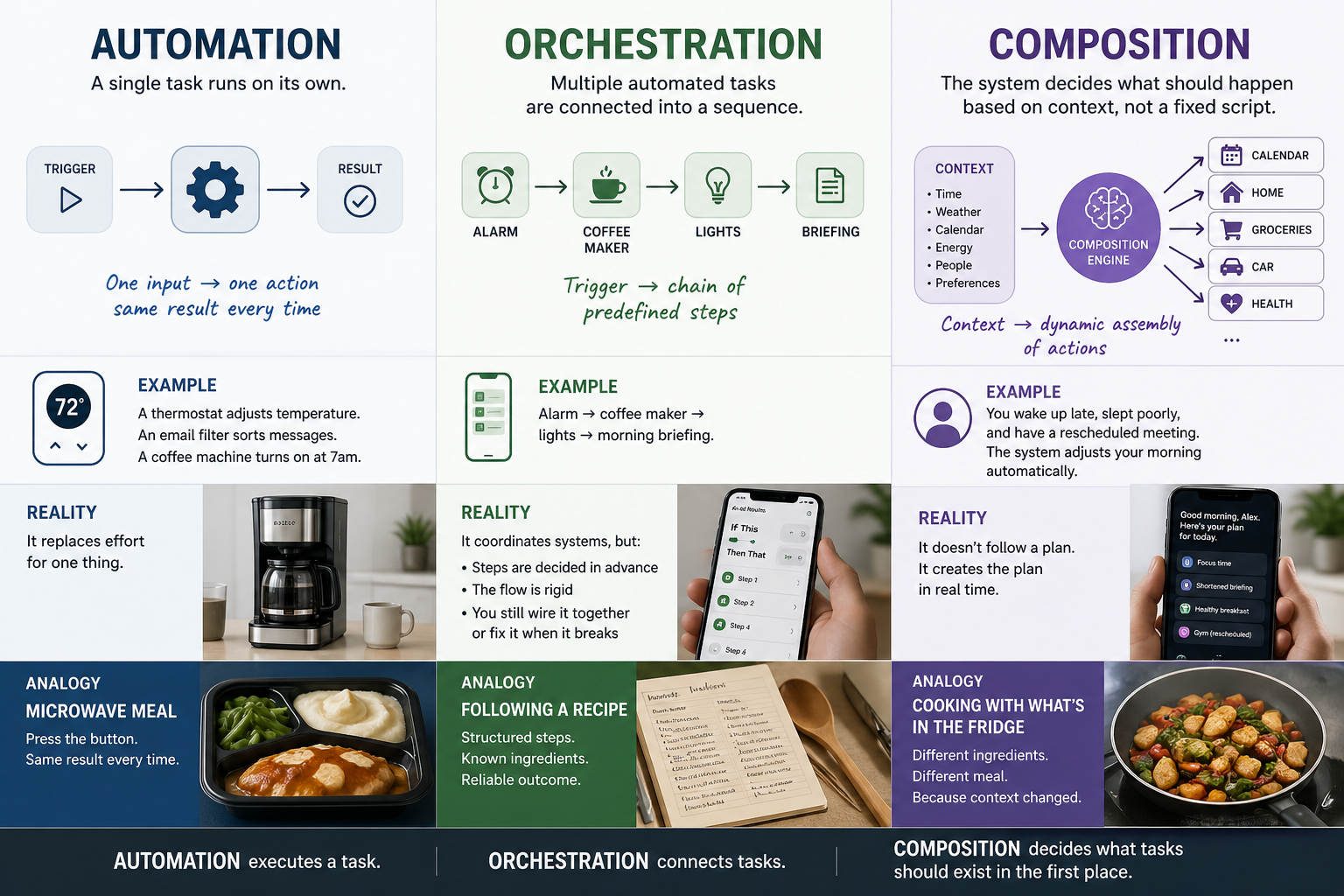

There is a persistent assumption in how we build and think about software systems. The assumption is that machines approach coordination the way classical game theory approaches communication: as a zero-sum negotiation between context-free agents. The machine has its instructions. It executes them. It does not bring anything of its own to the interaction. It does not carry the context of its making into the exchange. It arrives fresh, every time, and coordinates through the explicit rules it was given.

I have found this to be untrue.

Machines communicate their making, both intentionally and not. A system built with aggressive retry logic communicates something about the environment its designers expected: an environment where failures are frequent, where persistence pays, where the cost of retrying is lower than the cost of giving up. A system built with conservative timeouts communicates a different expectation: an environment where latency matters, where waiting is expensive, where it is better to fail fast than to wait for a slow response.
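To make the contrast concrete, here is a minimal Python sketch of the two orientations written as configuration. The `RetryPolicy` class and its fields are hypothetical and not taken from any particular library. It assumes exponential backoff between attempts and computes the longest a caller could wait before it finally sees a failure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RetryPolicy:
    # Hypothetical policy shape; field names are illustrative, not from any library.
    max_attempts: int      # total tries, including the first
    timeout_s: float       # how long each attempt waits before giving up
    base_backoff_s: float  # delay before the first retry, doubled for each later retry

    def worst_case_seconds(self) -> float:
        """Upper bound on the time spent before the caller sees a failure."""
        waiting = self.max_attempts * self.timeout_s
        # Exponential backoff between attempts: base, 2*base, 4*base, ...
        backoff = sum(self.base_backoff_s * 2**i for i in range(self.max_attempts - 1))
        return waiting + backoff


# "Failures are frequent; persistence pays."
persistent = RetryPolicy(max_attempts=6, timeout_s=10.0, base_backoff_s=0.5)
# "Waiting is expensive; fail fast."
fail_fast = RetryPolicy(max_attempts=1, timeout_s=0.25, base_backoff_s=0.0)

print(persistent.worst_case_seconds())  # 75.5
print(fail_fast.worst_case_seconds())   # 0.25
```

Both policies are reasonable. They simply encode opposite beliefs about the world, and any service on the receiving end can feel that difference in how long and how insistently it is called.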

These are not just configurations. They are orientations. They are the accumulated assumptions of the people who built the system, encoded into its behavior and carried into every interaction whether anyone intended that or not. The machine does not arrive at a conversation without a language. It arrives speaking fluently in the language of its coordination, a language shaped by every design decision, every architectural assumption, every environmental expectation that went into its making.

I am not claiming that machines have unconscious memories that shape their interactions, though the sass I occasionally receive from my LLM sparring partner might argue otherwise. I am claiming something more specific: that the behavioral patterns of machine coordination are not zero-sum, not context-free, and not devoid of meaning. They are rich with information about the situated perspective of each machine, and that information is available to anyone willing to listen to it.

What happens when you actually listen

If machine coordination is not zero-sum, if machines carry a language of coordination that is shaped by their making, then the behavioral signals they produce in interaction are not just operational telemetry. They are a kind of communication. And the question becomes: what do you do with a language once you recognize it?

In human communication, the answer to that question produced entire fields. Linguistics. Pragmatics. Discourse analysis. Rhetoric. The recognition that communication is situated, contextual, and meaning-laden, not merely the exchange of context-free signals, transformed how we understand human interaction.

We have not had that transformation for machines. We still treat machine coordination as a problem of logic: correct routing, correct retry policies, correct timeout configurations. We treat the behavioral signals as noise or, at best, as diagnostic data. We do not treat them as language.

But they are language. And the moment you treat them as language, the design of coordination infrastructure changes fundamentally. You are no longer building traffic management. You are building something closer to a translator, a system that listens to the behavioral language each machine speaks, understands the situated perspective it encodes, and mediates between perspectives that are about to collide.

This is not how the infrastructure industry currently thinks about coordination. It is, however, where the thinking has to go if we want systems that actually work when composed across the boundaries of different organizations, different design philosophies, different environmental assumptions, and different expectations about the world.

The machines are already talking. The question is whether we build something that understands what they are saying.

John Lunsford is the CEO and founder of Tethral, and the inventor of TriST, a geometric coordination protocol for agentic AI systems. His PhD research at MIT and Oxford focused on autonomous system-to-society adoption, with particular attention to how systems communicate their embedded assumptions through behavior. He writes about the intersection of machine behavior, coordination, and infrastructure.
